A value hypothesis is the core assumption your product rests on. It states who you're building for, what problem you're solving, and why someone would care enough to use what you built.

Get it wrong and everything downstream — your roadmap, your messaging, your go-to-market — is built on a faulty foundation.

Most teams skip this step. They move straight from idea to execution, treating the value assumption as settled when it hasn't been tested at all. The hypothesis only becomes visible when the product ships and nobody uses it.

What is a value hypothesis?

A value hypothesis is a testable statement that describes the value your product delivers to a specific customer. It comes from the lean startup methodology, where Eric Ries distinguished between two types of assumptions teams need to validate before scaling: value and growth.

The value hypothesis answers: does this product create value for the people it's meant to serve? It is not a mission statement or a marketing tagline. It is a falsifiable claim — one you can design experiments around and update based on evidence.

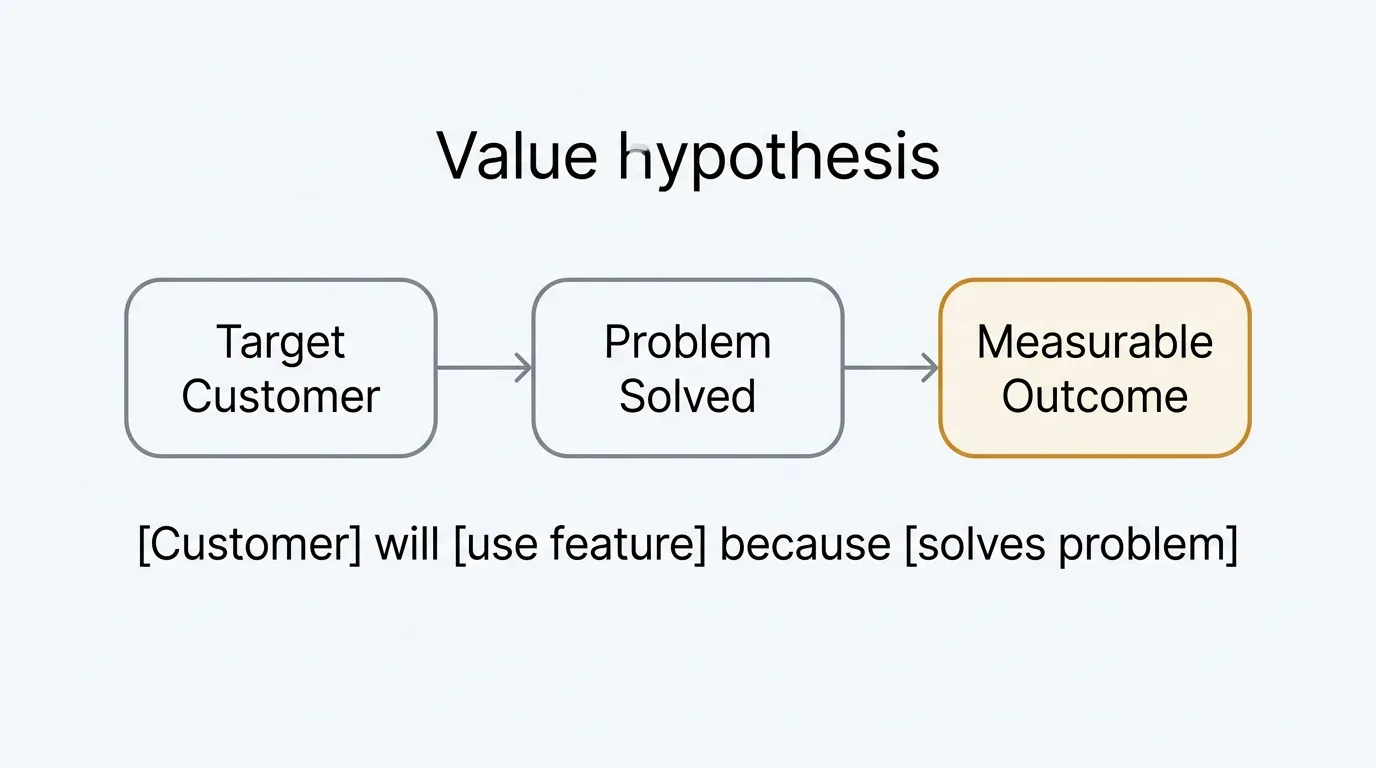

A value hypothesis has three components: a customer, a problem or job-to-be-done, and a claimed outcome. Without all three, it's too vague to test.

Value hypothesis vs growth hypothesis

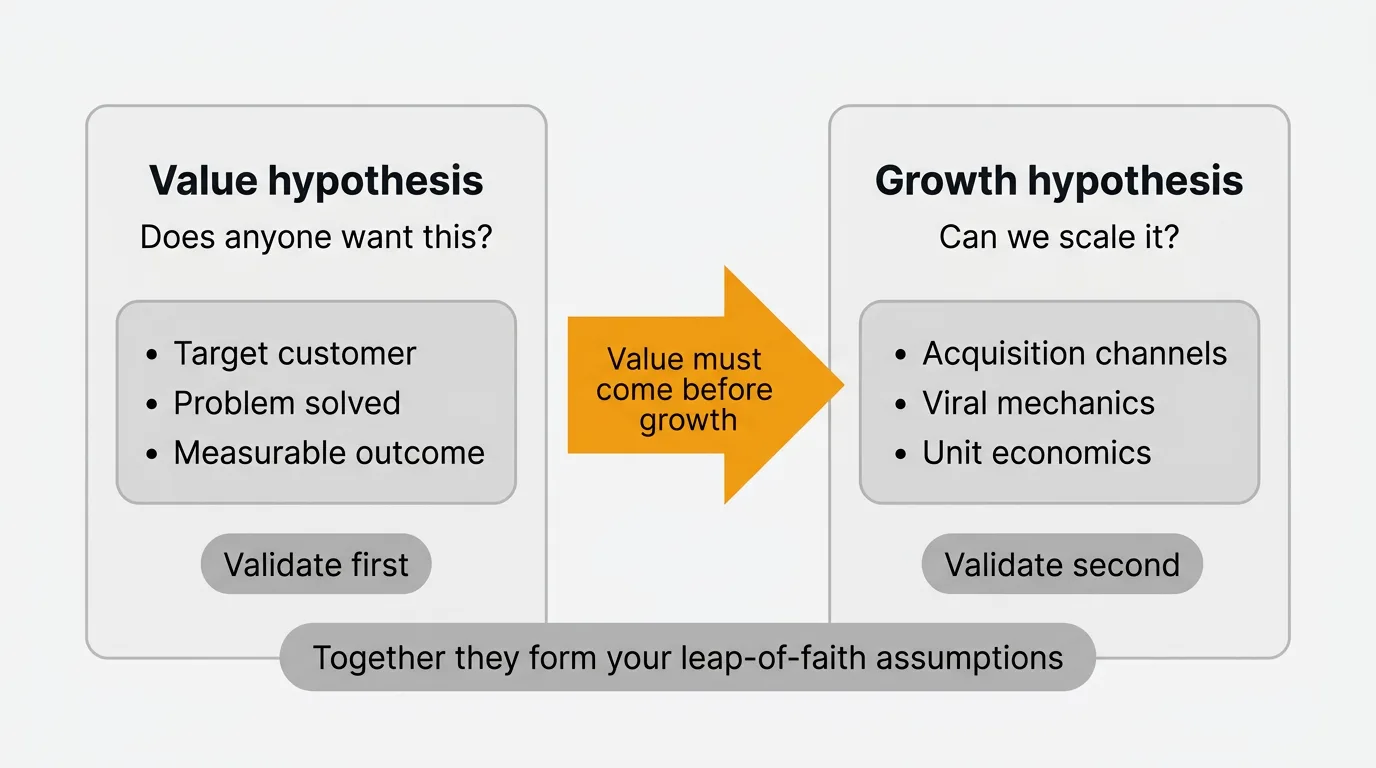

These two concepts are often confused, but they address different questions.

A value hypothesis asks: does anyone want this? Does the product solve a real problem for a real customer in a way they find valuable? This must be validated first.

A growth hypothesis asks: can we scale it? Can we find more customers like the ones who already value it, and can we acquire them sustainably? This is only worth testing once value is confirmed.

Trying to grow before validating value is one of the most expensive mistakes a product team can make. You scale a product nobody wants. You optimize acquisition channels before you've confirmed that the people you're acquiring actually get value from what you built.

Validate value first. Growth questions come second.

How to write a value hypothesis

A reliable formula for writing a value hypothesis is:

"[Target customer] will [use product/feature] because [it solves specific problem], resulting in [measurable outcome]."

This structure forces precision. You can't fill it out meaningfully without knowing who your customer is, what problem you're solving, and what success looks like for them.

Here are examples across different product types.

SaaS product (project management tool): "Freelance designers will use our time-tracking feature because tracking billable hours manually takes too long, resulting in more accurate invoices and fewer billing disputes with clients."

Marketplace: "Independent landlords will list properties on our platform because tenant screening currently requires contacting multiple services, resulting in a qualified tenant placed in under two weeks."

Developer tool: "Backend engineers at companies with multiple microservices will use our log aggregation API because debugging across distributed services is too slow with existing tools, resulting in a 50% reduction in mean time to resolution."

Consumer app: "First-time home buyers will use our mortgage comparison tool because comparing lender offers is confusing and time-consuming, resulting in a more confident decision in less than 30 minutes."

Each of these is falsifiable. You can design tests around them, measure the claimed outcome, and update the hypothesis when evidence contradicts it.
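If it helps to keep hypotheses consistent across a team, the formula can be stored as structured data rather than free text. The following is a minimal sketch, not a prescribed tool: the `ValueHypothesis` class and its field names are hypothetical, chosen only to mirror the four slots in the formula. It flags any slot left blank and renders the sentence.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ValueHypothesis:
    """Hypothetical container for the four slots in the formula."""

    customer: str  # who you are building for
    action: str    # how they use the product or feature
    problem: str   # the specific problem it solves
    outcome: str   # the measurable result you claim

    def missing_parts(self) -> list[str]:
        # A blank slot means the hypothesis is too vague to test.
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        # Fill the formula: [customer] will [action] because [problem],
        # resulting in [outcome].
        return (
            f"{self.customer} will {self.action} because {self.problem}, "
            f"resulting in {self.outcome}."
        )


hypothesis = ValueHypothesis(
    customer="Freelance designers",
    action="use our time-tracking feature",
    problem="tracking billable hours manually takes too long",
    outcome="more accurate invoices and fewer billing disputes with clients",
)

if not hypothesis.missing_parts():
    print(hypothesis.render())
```

A check like `missing_parts()` only catches empty slots. Whether the outcome is actually measurable still takes human judgment.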

How to test your value hypothesis

Testing a value hypothesis does not require a finished product. Most of the best tests happen before you write a single line of code. The methods below are listed roughly in order of effort.

Fake door tests

A fake door test presents a feature or product to real users before it exists. You add a button, a menu item, or a landing page CTA and measure how many people click through. The "door" leads nowhere — usually a message explaining that the feature is coming soon, or a waitlist form.

Fake door tests measure intent. Clicks are a much stronger signal than survey responses because they require action, not just opinion. A 15% click-through rate on a feature you haven't built yet is meaningful data. A 1% rate is a signal to reconsider.

The limitation is that fake door tests measure demand without confirming that the product actually delivers value. They're a first filter, not a final verdict.
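A click-through rate is only as trustworthy as the sample behind it, so it can be worth reporting a range alongside the raw percentage. The sketch below computes the rate and a Wilson score interval for it. The interval is an addition for illustration, not something the fake door method prescribes, and the function names and sample numbers (30 clicks from 200 views) are hypothetical.

```python
from __future__ import annotations

import math


def click_through_rate(clicks: int, impressions: int) -> float:
    """Share of users who saw the fake door and clicked it."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    if not 0 <= clicks <= impressions:
        raise ValueError("clicks must be between 0 and impressions")
    return clicks / impressions


def wilson_interval(clicks: int, impressions: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for the true rate; z=1.96 is roughly 95% coverage."""
    p = click_through_rate(clicks, impressions)
    n = impressions
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    margin = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    # Clamp to [0, 1] to absorb floating-point drift at the edges.
    return max(0.0, centre - margin), min(1.0, centre + margin)


# Hypothetical result: 30 clicks from 200 users who saw the button.
rate = click_through_rate(30, 200)
low, high = wilson_interval(30, 200)
print(f"CTR {rate:.1%}, plausible range {low:.1%} to {high:.1%}")
```

With a small sample the range is wide, which is a reason to treat a single fake door result as a first filter rather than a decision.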

Landing page and waitlist

Build a one-page description of the product and drive targeted traffic to it. Measure sign-up rate. The sign-up rate itself is a data point, but the more valuable output is the list of people who raised their hand. Talk to them.

A landing page validates that your value proposition resonates enough to prompt action. Combine it with an interview process and you get both a demand signal and qualitative insight into whether your framing matches how customers actually describe the problem.

Customer interviews

Interviews are the most direct way to test a value hypothesis. You talk to the customers you've hypothesized about, present the problem framing, and listen carefully to whether it resonates.

Good interview technique matters here. You're not pitching — you're listening. You want to hear them describe the problem in their own words, explain what they currently do about it, and react to your proposed solution. Confirmation bias is the main failure mode: you hear what you want to hear and stop probing.

See a future post on user interview questions for a structured approach to getting honest signal from customer conversations.

Feature voting

If you already have users, feature voting is one of the most efficient ways to test value hypotheses around specific capabilities. You describe a feature or improvement in plain language, publish it on your feedback board, and let users vote and comment.

Vote counts give you a demand signal. Comments give you qualitative context — users explain why they want the feature, which often reveals whether they're facing the problem you hypothesized or a different one entirely.

This approach validates demand before you commit engineering resources. It also creates a record you can refer back to when you're deciding how much confidence to assign to your estimates. A feature with 200 votes and 40 detailed comments is a much safer bet than one with three votes and no comments.

PMF survey

A product-market fit survey tests whether your product is delivering value at the level your hypothesis claims. The core question, from Sean Ellis, is: "How would you feel if you could no longer use this product?" Users who answer "very disappointed" are your engaged, value-confirmed segment.

If fewer than 40% of your respondents say "very disappointed," your value hypothesis likely needs refinement — either you're targeting the wrong customer or the product isn't solving the problem as effectively as you assumed.
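The 40% check is simple arithmetic once responses are exported. Here is a minimal sketch, assuming each response is stored as a plain answer label. The labels other than "very disappointed", the function names, and the sample counts are assumptions made for illustration.

```python
from __future__ import annotations

from collections import Counter

PMF_THRESHOLD = 0.40  # the 40% benchmark described above


def very_disappointed_share(responses: list[str]) -> float:
    """Fraction of respondents who answered 'very disappointed'."""
    if not responses:
        raise ValueError("no responses to score")
    # Normalise case and whitespace so label variants count together.
    counts = Counter(r.strip().lower() for r in responses)
    return counts["very disappointed"] / len(responses)


def needs_refinement(responses: list[str]) -> bool:
    """True when the share falls below the 40% benchmark."""
    return very_disappointed_share(responses) < PMF_THRESHOLD


# Hypothetical survey export with 50 respondents.
responses = (
    ["Very disappointed"] * 18
    + ["Somewhat disappointed"] * 22
    + ["Not disappointed"] * 10
)

share = very_disappointed_share(responses)
print(f"{share:.0%} very disappointed; refine hypothesis: {needs_refinement(responses)}")
```

Running the same calculation per customer segment can show whether the shortfall comes from targeting the wrong customer or from the product itself.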

See a future post on PMF survey methodology for question wording, sampling, and how to interpret the results.

Using feedback data to validate

Quantitative and qualitative feedback serve different roles in hypothesis validation.

Vote counts and engagement metrics act as demand signals. When a large share of your user base votes on a specific problem, that's evidence the problem is real and widespread. That evidence justifies raising your Confidence estimate when you're scoring work with a framework like RICE (Reach, Impact, Confidence, Effort).
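To see how a stronger demand signal changes prioritization, it helps to look at the RICE arithmetic itself: Reach times Impact times Confidence, divided by Effort. The sketch below uses that standard formula. The input values, and the decision to move Confidence from 0.5 to 0.8 after a round of votes, are hypothetical. How much votes should move Confidence is a judgment call that the formula does not settle.

```python
def rice_score(reach: float, impact: float, confidence: float, effort: float) -> float:
    """RICE score: (reach * impact * confidence) / effort."""
    if effort <= 0:
        raise ValueError("effort must be positive")
    if not 0 <= confidence <= 1:
        raise ValueError("confidence must be between 0 and 1")
    return reach * impact * confidence / effort


# Hypothetical feature: 500 users reached per quarter, impact 2,
# 4 person-months of effort.
before_votes = rice_score(reach=500, impact=2, confidence=0.5, effort=4)
after_votes = rice_score(reach=500, impact=2, confidence=0.8, effort=4)

print(f"Score before feedback: {before_votes:.0f}")
print(f"Score after feedback:  {after_votes:.0f}")
```

Because Confidence is a straight multiplier, validated demand raises the score in direct proportion, which is why untested assumptions should carry a low value.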

Qualitative feedback — comments, interview notes, open-ended survey responses — tells you whether your value framing is accurate. Users might be voting for something you described one way but wanting it for a completely different reason. That gap between your hypothesis and their actual motivation is critical information.

Quackback's feedback board surfaces both signals in one place. You see vote counts alongside the comments users leave, which means you can distinguish between "many people want this" and "many people want this for the reason I think they do." Those are different validations, and you need both.

The goal is not to accumulate feedback. It is to update your hypothesis based on what the feedback reveals.

What to do when your hypothesis is wrong

A failed value hypothesis is not a failed product. It is a signal to adjust your framing before investing more.

The first thing to check is whether you have the wrong customer. Often the problem is real, but you've been talking to the wrong segment. The people who experience the pain most acutely may be different from the ones you assumed.

The second thing to check is whether you have the wrong problem. Users might be experiencing friction in the area you hypothesized, but the specific pain point you identified isn't the one that matters most to them. Look at how users describe the problem in their own words. If their language doesn't match your hypothesis, update the hypothesis.

Pivot the value proposition, not the entire product. Most failed value hypotheses don't mean the product direction is wrong. They mean the framing was off, the target segment was too broad, or the claimed outcome didn't match what users actually care about.

Your feedback data is the fastest way to diagnose which of these is true. The comments users leave when they vote or submit feedback often contain the language you need to rewrite a sharper hypothesis.

Frequently asked questions

What's the difference between a value hypothesis and a value proposition?

A value hypothesis is an unvalidated assumption — it's what you believe to be true before you have evidence. A value proposition is the refined, validated version you communicate to customers once you have confirmation that the value is real. You test a value hypothesis to arrive at a value proposition.

How many value hypotheses should you test at once?

One or two at a time. Testing more than that spreads your attention too thin and makes it hard to isolate which variable is responsible for what you observe. Pick your most critical assumption, design the smallest test that could falsify it, and move on to the next once you have a clear result.

When should you abandon a value hypothesis?

When the evidence against it is consistent across multiple test methods and multiple customer segments. A single failed experiment isn't enough — test designs have flaws, and samples can be unrepresentative. If fake door tests, interviews, and a waitlist all point in the same direction, that's when you have enough signal to move on.

How specific does a value hypothesis need to be?

Specific enough to be falsifiable. If you can't describe a test that would prove it wrong, the hypothesis is too vague. "Users will find this useful" is not a hypothesis. "Mid-market operations teams will reduce manual reporting time by 30% using this feature" is one — it names a customer, describes an outcome, and implies a measurement.

Authored by James Morton

Founder of Quackback. Building open-source feedback tools.
