You need to know if users are satisfied. The problem is that most feedback mechanisms are slow, indirect, or noisy. CSAT surveys solve this. One focused question, sent at the right moment, tells you exactly how a user felt about a specific experience — in under five seconds.

The CSAT score is a rate, not a feeling. It is something you can track, segment, and act on. This guide explains how CSAT works, when to use it over NPS or CES, and gives you four copy-pasteable templates for the most common scenarios.

What is a CSAT survey

A CSAT (Customer Satisfaction Score) survey asks users how satisfied they were with a specific interaction, feature, or experience. The core question is:

"How satisfied are you with [product / support interaction / onboarding]?"

Users respond on a 1-5 scale, where 1 is very dissatisfied and 5 is very satisfied. Some teams use a 1-10 scale or even a three-point scale (unhappy, neutral, happy). The 1-5 scale is the most common, which also makes it the easiest to compare against published benchmarks.

The formula:

CSAT = (number of satisfied responses / total responses) × 100

Satisfied responses are typically defined as scores of 4 or 5. If 80 out of 100 respondents gave a 4 or 5, your CSAT score is 80%.
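
The formula takes only a few lines of code. The sketch below is a minimal Python version. It assumes ratings arrive as integers on the 1-5 scale and that 4 and 5 count as satisfied. The function name `csat_score` and its `satisfied_threshold` parameter are illustrative and not part of any particular survey tool.

```python
def csat_score(ratings: list[int], satisfied_threshold: int = 4) -> float:
    """Return CSAT as a percentage: the share of ratings at or above the threshold."""
    if not ratings:
        raise ValueError("CSAT is undefined when there are no responses")
    satisfied = sum(1 for r in ratings if r >= satisfied_threshold)
    return 100 * satisfied / len(ratings)


# The example from the text: 80 of 100 respondents chose a 4 or 5.
responses = [5] * 50 + [4] * 30 + [3] * 12 + [2] * 5 + [1] * 3
print(csat_score(responses))  # 80.0
```

If you use a 1-10 scale, pass a different threshold. Where you draw that line is a judgment call, but keep it fixed once you choose it so that scores stay comparable over time.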

CSAT is intentionally narrow. It measures a moment, not a relationship. That specificity is what makes it useful — you know exactly what the score refers to and when the experience happened. A CSAT survey sent immediately after a support ticket closes tells you whether that ticket was resolved well. It does not tell you whether the user will recommend you to a colleague next month. That is a different question.

CSAT vs NPS vs CES

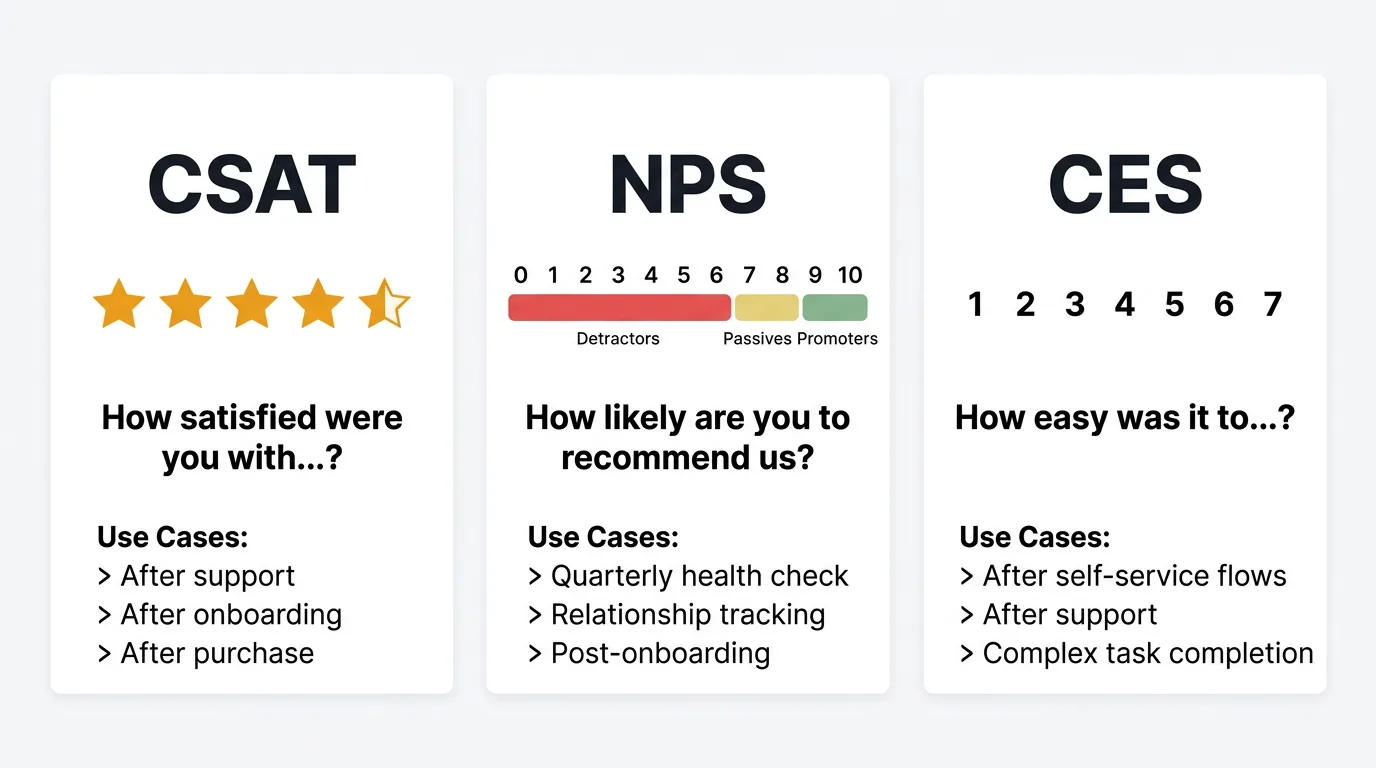

These three metrics are often conflated. They measure different things and belong at different points in the user journey.

| Metric | What it measures | Core question | Scale | When to use |
|---|---|---|---|---|
| CSAT | Satisfaction with a specific interaction | "How satisfied were you with...?" | 1-5 | After support, onboarding, feature use, purchase |
| NPS | Overall loyalty and likelihood to recommend | "How likely are you to recommend us?" | 0-10 | Quarterly, post-onboarding, relationship tracking |
| CES | Effort required to complete a task | "How easy was it to...?" | 1-7 | After self-service flows, support, complex tasks |

Use CSAT when you need a verdict on a specific touchpoint. Did the support agent resolve the issue? Was onboarding clear? Did the checkout flow work?

Use NPS when you want to understand overall loyalty and track it over time. See the NPS survey template guide for templates and scoring formulas.

Use CES when you suspect friction is the problem. If users are dropping off during a specific workflow, CES tells you whether the effort required is the cause.

Most teams benefit from running all three, but at different times and for different purposes. Trigger CSAT surveys after key interactions and run an NPS survey every quarter. Together, these give you both the granular interaction data and the long-run loyalty trend.

Core CSAT questions

The primary question

"How satisfied are you with [your support experience / your onboarding / [Product Name]]?"

Scale: 1 (Very dissatisfied) — 2 (Dissatisfied) — 3 (Neutral) — 4 (Satisfied) — 5 (Very satisfied)

Keep the labels on both ends of the scale visible. Users should not have to guess which end is positive.

Follow-up questions

The primary question gives you the score. Follow-up questions tell you what to do with it. Use one or two follow-ups — not all of them.

"What was the main reason for your rating?" An open text field after the rating. This single question captures the most actionable qualitative signal you will collect. It is especially valuable for low scores (1-3) where the gap between "what went wrong" and "how to fix it" is widest.

"What could we have done better?" Works well after a low or neutral score. It is forward-looking, which makes it easier for users to answer than "what went wrong," which can feel accusatory.

"Was your issue fully resolved?" (Yes / No / Partially) Specific to post-support surveys. The CSAT score tells you whether the interaction felt good. This tells you whether the problem is actually gone.

"How would you rate the speed of our response?" (1-5) Useful if response time is a known pain point or SLA metric. Separates quality of resolution from speed of resolution.

"How likely are you to contact us again with a question?" (1-5) A proxy for confidence. If users are not sure they would reach out again, your support experience may feel unreliable even when the individual ticket resolved well.

"Which part of the experience could we improve?" (select all that apply) A multiple-choice follow-up makes it easy to respond quickly. Offer options like: Speed, Clarity of communication, Technical expertise, Friendliness, Resolution quality. Add an "Other" option with a text field.

"May we follow up with you about your experience?" (Yes / No) If a user gives a 1 or 2 and says yes, that is a direct invitation for a personal conversation. These conversations produce more insight than any survey.

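If your survey tool supports branching, you can encode the advice to ask only one or two follow-ups as a small rule that picks questions based on the rating. The thresholds below are an assumption drawn from the guidance above: forward-looking questions after low or neutral scores, and a request to follow up after a 1 or 2. `pick_follow_ups` is a hypothetical helper, not a feature of any specific tool.

```python
MAIN_REASON = "What was the main reason for your rating?"
DO_BETTER = "What could we have done better?"
MAY_FOLLOW_UP = "May we follow up with you about your experience?"


def pick_follow_ups(rating: int) -> list[str]:
    """Choose at most two follow-up questions for a 1-5 CSAT rating.

    The branching is an illustrative assumption, not a fixed rule:
    - 1-2: ask what to improve, then ask permission for a personal follow-up
    - 3:   ask what to improve (forward-looking, so it feels less accusatory)
    - 4-5: ask for the main reason behind the rating
    """
    if not 1 <= rating <= 5:
        raise ValueError("rating must be between 1 and 5")
    if rating <= 2:
        return [DO_BETTER, MAY_FOLLOW_UP]
    if rating == 3:
        return [DO_BETTER]
    return [MAIN_REASON]


print(pick_follow_ups(2))
```
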
CSAT survey templates

Post-support interaction

Send within two hours of a ticket being marked resolved. Response rates drop significantly after 24 hours.

Question 1: "How satisfied were you with the support you received today?" (1-5)

Question 2: "Was your issue fully resolved?" (Yes / No / Partially)

Question 3: "What could we have done better?" (open text, optional)

Keep this one short. Users have already spent time dealing with a problem. The shorter the survey, the higher the completion rate.

Post-onboarding

Send 3-5 days after a user completes the core onboarding flow. Early enough that the experience is fresh; late enough that they have had a chance to encounter the product on their own.

Question 1: "How satisfied are you with your onboarding experience?" (1-5)

Question 2: "Which part of the onboarding experience, if any, could be clearer?" (open text)

Question 3: "Did you feel confident using [Product] after completing setup?" (Yes / Somewhat / No)

Question 4: "What was most helpful during onboarding?" (open text, optional)

Onboarding CSAT is one of the earliest churn signals available. A score below 70% warrants a direct look at your onboarding flow and, if possible, a follow-up conversation with the lowest-scoring users.

Product experience (after using a feature)

Send after a user has meaningfully engaged with a specific feature — for example, after they export their first report, publish their first update, or complete a key workflow for the first time.

Question 1: "How satisfied are you with [Feature Name]?" (1-5)

Question 2: "Did [Feature Name] do what you expected?" (Yes / Mostly / No)

Question 3: "What would make [Feature Name] more useful for you?" (open text)

Feature-level CSAT helps you prioritize improvements. If a feature that drives activation has a CSAT below 60%, that is a higher-priority fix than a low score on a rarely-used edge case. Pair this with usage data to understand the relative weight of each feature's satisfaction score.
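
One way to pair feature CSAT with usage data is to filter for features below the threshold and then sort them by how many people use them. In this sketch, `FeatureStats`, `weekly_users`, and the sample numbers are all hypothetical. Substitute whatever activity metric your analytics already tracks.

```python
from dataclasses import dataclass


@dataclass
class FeatureStats:
    name: str
    csat: float        # CSAT percentage for this feature, 0-100
    weekly_users: int  # hypothetical usage metric from your analytics


def prioritize(features: list[FeatureStats], threshold: float = 60.0) -> list[str]:
    """Names of features below the CSAT threshold, most-used first."""
    below = [f for f in features if f.csat < threshold]
    below.sort(key=lambda f: f.weekly_users, reverse=True)
    return [f.name for f in below]


stats = [
    FeatureStats("Report export", csat=55.0, weekly_users=4200),
    FeatureStats("Legacy CSV import", csat=40.0, weekly_users=90),
    FeatureStats("Dashboards", csat=82.0, weekly_users=6100),
]
print(prioritize(stats))  # ['Report export', 'Legacy CSV import']
```

Here the rarely used import has the lower score but still ranks second. This matches the point above: a weak score on a heavily used feature outranks a weaker score on an edge case.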

Post-purchase

Send within an hour of a completed purchase or plan upgrade. The experience of signing up and paying is a distinct step that often creates friction separate from the product itself.

Question 1: "How satisfied were you with the checkout and signup experience?" (1-5)

Question 2: "Was there anything that made the process harder than expected?" (open text)

Question 3: "How confident do you feel about the plan you chose?" (Very confident / Somewhat confident / Not sure)

Post-purchase CSAT isolates the commercial experience from the product experience. A user can love your product and still have had a frustrating upgrade process. Catching that frustration early gives you a chance to address it before it colors their view of everything else.

When to send CSAT surveys

Timing is as important as the questions themselves. A well-written survey sent at the wrong moment produces low-quality data.

After support ticket resolution. Send within two hours of closing the ticket. This is the highest-response-rate moment for post-support CSAT because the experience is immediate.

After onboarding completion. Not on day one. Day one is setup friction, not product satisfaction. Send after the user has completed the core flow and had a short time to work independently.

After key feature milestones. Trigger a survey the first time a user completes a meaningful action in a feature. This captures genuine first-use impressions without interrupting repeat usage.

After purchases and plan changes. Upgrades, downgrades, and new purchases all involve decision-making that users have opinions about. Survey within 60 minutes.
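
You can keep the timing rules above in one lookup table so that every trigger uses the same windows. This is a sketch with a few assumptions. The event names are hypothetical. The windows come from this guide: within two hours after support, three to five days after onboarding, and within an hour after a purchase. The first-use check assumes you store which (user, feature) pairs have already been surveyed.

```python
from datetime import datetime, timedelta

# (earliest, latest) send offsets after each triggering event, per the guidance above.
# The event names are hypothetical; use whatever your product actually emits.
SEND_WINDOWS = {
    "support_ticket_resolved": (timedelta(0), timedelta(hours=2)),
    "onboarding_completed": (timedelta(days=3), timedelta(days=5)),
    "purchase_or_plan_change": (timedelta(0), timedelta(minutes=60)),
}


def send_window(event_type: str, occurred_at: datetime) -> tuple[datetime, datetime]:
    """Return the earliest and latest moments to send a CSAT survey for an event."""
    earliest, latest = SEND_WINDOWS[event_type]
    return occurred_at + earliest, occurred_at + latest


def should_survey_feature(user_id: str, feature: str, surveyed: set[tuple[str, str]]) -> bool:
    """Survey only the first time a user completes a meaningful action in a feature."""
    return (user_id, feature) not in surveyed


closed_at = datetime(2024, 5, 14, 10, 0)
print(send_window("support_ticket_resolved", closed_at))
```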

Avoid stacking surveys. If a user just completed an NPS survey, hold off on CSAT for at least two weeks. Survey fatigue is real and affects both response rates and the quality of answers. For more on collecting customer feedback without overwhelming users, including timing and channel strategies, see the full guide.
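
A small eligibility check can enforce this before any survey goes out. The sketch applies two rules from this guide: the two-week gap after an NPS survey, and the advice to avoid sending more than one survey of any type to the same user per month. The history format and the calendar-month reading of "per month" are assumptions.

```python
from datetime import datetime, timedelta

NPS_COOLDOWN = timedelta(days=14)  # "hold off on CSAT for at least two weeks" after NPS


def can_send_csat(now: datetime, history: list[tuple[str, datetime]]) -> bool:
    """Decide whether one user may receive a CSAT survey right now.

    `history` is a hypothetical list of (survey_type, sent_at) pairs for that user.
    Rules applied:
    - no CSAT within 14 days of an NPS survey
    - no more than one survey of any type per month, interpreted here
      (an assumption) as one per calendar month
    """
    for survey_type, sent_at in history:
        if survey_type == "nps" and now - sent_at < NPS_COOLDOWN:
            return False
        if (sent_at.year, sent_at.month) == (now.year, now.month):
            return False
    return True


history = [("nps", datetime(2024, 5, 31, 9, 0))]
print(can_send_csat(datetime(2024, 6, 5, 9, 0), history))   # False: NPS was 5 days ago
print(can_send_csat(datetime(2024, 6, 20, 9, 0), history))  # True: 20 days later, new month
```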

Mid-week, mid-morning for email. If you are distributing via email, Tuesday through Thursday between 9am and 11am local time tends to produce the highest open and response rates. Avoid Mondays and Fridays.
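
If you schedule email surveys programmatically, you can apply the mid-week, mid-morning window as a final step before sending. This sketch assumes `now` is a naive `datetime` already converted to the recipient's local time. Time-zone conversion is left out, and `next_email_slot` is a hypothetical helper.

```python
from datetime import datetime, time, timedelta

SEND_WEEKDAYS = {1, 2, 3}  # datetime.weekday(): Monday is 0, so 1-3 are Tuesday-Thursday
WINDOW_START = time(9, 0)
WINDOW_END = time(11, 0)


def next_email_slot(now: datetime) -> datetime:
    """Return `now` if it is inside the Tue-Thu 9-11am window, else the next window start.

    Assumes `now` is a naive datetime already expressed in the recipient's local time.
    """
    if now.weekday() in SEND_WEEKDAYS and WINDOW_START <= now.time() < WINDOW_END:
        return now
    day = now.date()
    # Today only still works if it is a send day and the window has not opened yet.
    if not (now.weekday() in SEND_WEEKDAYS and now.time() < WINDOW_START):
        day += timedelta(days=1)
    while day.weekday() not in SEND_WEEKDAYS:
        day += timedelta(days=1)
    return datetime.combine(day, WINDOW_START)


# Friday afternoon rolls forward to the following Tuesday morning.
print(next_email_slot(datetime(2024, 5, 17, 14, 0)))  # 2024-05-21 09:00:00
```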

In-app at natural pause points. Show the survey after a task is completed, not during one. Interrupting an active workflow produces resentment, not useful data.

How to analyze CSAT data

Calculate your score

Apply the formula: (number of 4s and 5s / total responses) × 100. Your score is a percentage, not an average of the raw ratings. This matters because a 3 does not count as "half satisfied" — it counts as not satisfied.
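
A small example shows why the percentage and the raw average can tell different stories. The ratings below are made up for illustration. Their average sits above neutral, yet only one respondent was actually satisfied.

```python
from statistics import mean


def csat_percent(ratings: list[int]) -> float:
    """Share of 4s and 5s, as a percentage. A 3 counts as not satisfied."""
    return 100 * sum(1 for r in ratings if r >= 4) / len(ratings)


ratings = [3, 3, 3, 3, 5]
print(mean(ratings))          # 3.4  (the raw average looks mildly positive)
print(csat_percent(ratings))  # 20.0 (only one respondent was actually satisfied)
```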

Track this score over time. A weekly or monthly chart tells you whether specific changes — a new support process, an updated onboarding flow, a feature improvement — moved satisfaction in the direction you intended.

Segment by cohort and context

A single aggregate CSAT score hides meaningful differences. Segment your data by:

- Survey type — post-support scores behave differently from post-onboarding scores

- User plan or tier — enterprise users may have different expectations and experiences

- Feature — if you survey per feature, which features have the lowest scores?

- Time period — did scores change after a release or an outage?

Segmentation turns a number into a diagnosis. An aggregate CSAT of 75% tells you little. A CSAT of 75% overall but 52% for users on the free plan tells you something specific to investigate.
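
Segmenting amounts to grouping responses by one attribute and applying the same formula to each group. This sketch assumes each response is a dict with a `rating` field plus whatever attributes you segment on. The field names `plan` and `survey` are hypothetical.

```python
from collections import defaultdict


def csat_by_segment(responses: list[dict], key: str) -> dict[str, float]:
    """Compute CSAT (% of 4s and 5s) for each value of `key` in the responses."""
    buckets: dict[str, list[int]] = defaultdict(list)
    for r in responses:
        buckets[r[key]].append(r["rating"])
    return {
        segment: 100 * sum(1 for x in ratings if x >= 4) / len(ratings)
        for segment, ratings in buckets.items()
    }


responses = [
    {"plan": "free", "survey": "support", "rating": 2},
    {"plan": "free", "survey": "onboarding", "rating": 4},
    {"plan": "pro", "survey": "support", "rating": 5},
    {"plan": "pro", "survey": "onboarding", "rating": 4},
]
print(csat_by_segment(responses, "plan"))    # {'free': 50.0, 'pro': 100.0}
print(csat_by_segment(responses, "survey"))  # {'support': 50.0, 'onboarding': 100.0}
```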

Track trends, not snapshots

A single survey cycle is a snapshot. The value of CSAT comes from consistency over time. Run surveys at regular intervals, use consistent question wording, and keep your scoring formula the same so that month-over-month comparisons are valid.

When a score drops sharply, look for what changed around that time. A score that declines gradually over several months often points to a product quality issue or growing competition. A sudden drop usually has a specific cause — a bad release, a support process failure, a pricing change.
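
To spot the sudden drops described above, compare each period with the one before it. In this sketch, the weekly series and the 10-point default threshold are arbitrary illustrations. With only a handful of responses per week, week-to-week swings are noisy, so tune the threshold to your response volume.

```python
def flag_sudden_drops(weekly_csat: list[float], threshold: float = 10.0) -> list[int]:
    """Return indexes of weeks whose CSAT fell by more than `threshold` points
    compared with the previous week."""
    return [
        i
        for i in range(1, len(weekly_csat))
        if weekly_csat[i - 1] - weekly_csat[i] > threshold
    ]


weekly = [81.0, 80.0, 79.5, 66.0, 78.0]
print(flag_sudden_drops(weekly))  # [3]
```

A gradual decline will not trip this check. That is intentional, because the text treats slow erosion as a different kind of signal from a one-week drop.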

Combine with qualitative feedback

The score tells you where to look. The open-text responses tell you what you are looking at. Route qualitative CSAT responses into your feedback board alongside feature requests and support insights. When five users with scores of 1 or 2 all describe the same problem, that is a pattern you can act on — not just a number to watch.
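
Once open-text comments are tagged with a theme, spotting a pattern like the one described above is a counting problem. This sketch assumes a hypothetical `theme` field applied by whoever triages the comments. It uses a threshold of five to mirror the example in the text.

```python
from collections import Counter


def recurring_low_score_themes(responses: list[dict], min_count: int = 5) -> list[str]:
    """Return themes raised by at least `min_count` respondents who scored 1 or 2.

    Assumes each response already carries a hypothetical "theme" tag.
    """
    counts = Counter(
        r["theme"] for r in responses if r["rating"] <= 2 and r.get("theme")
    )
    return [theme for theme, n in counts.most_common() if n >= min_count]


responses = (
    [{"rating": 1, "theme": "export fails"}] * 5
    + [{"rating": 2, "theme": "slow replies"}] * 2
    + [{"rating": 5, "theme": "export fails"}] * 3
)
print(recurring_low_score_themes(responses))  # ['export fails']
```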

For more on the difference between measuring at scale and interpreting meaning, see the guide on qualitative vs quantitative feedback.

Improving your CSAT score

Close the feedback loop

Every user who gives a 1 or 2 and leaves a comment should receive a personal reply. Not a form response — a message that references what they said and explains what you are doing about it. This is the highest-leverage action you can take with CSAT data. Users who feel heard often revise their view before you have shipped a single fix.

For users who gave a 3, a brief check-in asking if there is anything you can help with goes a long way. The neutral group is often more recoverable than the dissatisfied group, and it tends to make up a large share of your respondents.
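
The two follow-up rules in this section map cleanly onto a small triage function. The action labels are hypothetical; connect them to your own inbox or helpdesk. The guide does not say what to do with a 1 or 2 that has no comment, so the `review` branch is an assumption.

```python
def follow_up_action(rating: int, comment: str) -> str:
    """Map a 1-5 CSAT response to the follow-up this section recommends."""
    if rating <= 2 and comment.strip():
        return "personal_reply"  # reference what they said and what you are doing about it
    if rating <= 2:
        return "review"          # assumption: a person still looks at uncommented low scores
    if rating == 3:
        return "check_in"        # brief "is there anything we can help with?" message
    return "none"


print(follow_up_action(1, "Export keeps failing"))  # personal_reply
```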

Act on low scores systematically

Low CSAT scores are signals, not incidents. Build a process around them. When a ticket gets a 1 or 2, who sees that? What happens next? If the answer is "nothing, unless someone checks the dashboard," you are leaving the most actionable data you have untouched.

Route low-score alerts to the relevant team — support lead, product manager, or account manager — within the same business day. The longer the gap between the bad experience and the follow-up, the less likely you are to recover the relationship.
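
A routing rule like this can be sketched as a lookup from survey type to owner, plus a same-day deadline. In this sketch, the team names, the survey-type keys, and the 17:00 end of the business day are all assumptions. Weekends and holidays are ignored to keep it short.

```python
from datetime import datetime, time, timedelta
from typing import Optional

# Hypothetical mapping from survey type to the team that owns the follow-up.
OWNERS = {
    "post_support": "support_lead",
    "post_onboarding": "product_manager",
    "feature": "product_manager",
    "post_purchase": "account_manager",
}
END_OF_BUSINESS = time(17, 0)  # assumption: the business day ends at 17:00


def route_low_score(
    survey_type: str, rating: int, received_at: datetime
) -> Optional[tuple[str, datetime]]:
    """Return (owner, respond_by) for a 1 or 2 rating, or None for anything higher."""
    if rating > 2:
        return None
    respond_by = datetime.combine(received_at.date(), END_OF_BUSINESS)
    if received_at >= respond_by:
        # Arrived after hours, so the same-day target has passed; use the next day.
        respond_by += timedelta(days=1)
    owner = OWNERS.get(survey_type, "support_lead")  # assumption: support triages unknown types
    return owner, respond_by


print(route_low_score("post_onboarding", 1, datetime(2024, 5, 14, 10, 0)))
```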

Follow up with dissatisfied users

Beyond the automated follow-up, invite users who consistently score low to a short conversation. These are your most informative users. They have tried your product, run into something that did not work, and told you about it. That is rare. Treat it as an opportunity, not a complaint to manage.

A 20-minute call with a dissatisfied user will often surface more specific, actionable insight than 200 survey responses from satisfied ones. Satisfied users tend to offer vague praise. Dissatisfied users usually know exactly what they want. Building this practice into your customer feedback loop helps ensure that low CSAT scores consistently produce improvements rather than just reports.

Frequently asked questions

What is a good CSAT score?

For most SaaS products, a CSAT score above 75% is considered good. Above 85% is strong. Scores below 60% indicate a persistent problem that warrants immediate attention. Industry benchmarks vary significantly, so the most reliable comparison is your own historical trend — a score of 72% that is steadily improving tells a better story than a score of 80% in slow decline.
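
The rough bands above, combined with a trend measure, can be expressed in a few lines. The thresholds come from this answer. The label for the 60-75% range is an assumption because the guide does not name that band. `average_change` is one simple way to measure direction: it reports the average change per period between the oldest and newest scores.

```python
def describe_csat(score: float) -> str:
    """Label a CSAT percentage with the rough SaaS bands quoted in this guide."""
    if score > 85:
        return "strong"
    if score > 75:
        return "good"
    if score < 60:
        return "needs immediate attention"
    return "below good"  # 60-75%: the guide does not name this band


def average_change(history: list[float]) -> float:
    """Average change per period, oldest to newest (positive means improving)."""
    if len(history) < 2:
        raise ValueError("need at least two periods to measure a trend")
    return (history[-1] - history[0]) / (len(history) - 1)


improving = [66.0, 69.0, 72.0]
declining = [86.0, 83.0, 80.0]
print(describe_csat(improving[-1]), average_change(improving))  # below good 3.0
print(describe_csat(declining[-1]), average_change(declining))  # good -3.0
```

Reading the label and the trend together reflects the point above: a 72% score rising three points per period is arguably in better shape than an 80% score falling at the same rate.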

How many questions should a CSAT survey have?

Two to three questions is the right target for most contexts. The primary satisfaction question, one open-text follow-up, and optionally one structured follow-up (yes/no or multiple choice). Surveys longer than three questions see a noticeable drop in completion rate. Keep the survey short enough that a user can finish it in under a minute.

How is CSAT different from NPS?

CSAT measures satisfaction with a specific interaction at a specific point in time. NPS measures overall loyalty and the likelihood of recommending your product. CSAT is contextual and immediate. NPS is relational and longitudinal. Use CSAT after discrete interactions — support, onboarding, purchases. Use NPS to track the overall health of your relationship with users over time. See the NPS survey template for a detailed breakdown.

How often should I send CSAT surveys?

Send CSAT surveys as close to the relevant event as possible — not on a fixed schedule. After a support ticket resolves, send within two hours. After onboarding completes, send within a few days. The survey should be tied to an event, not a calendar. The exception is periodic product experience surveys, which you can run quarterly for users who have not recently triggered an event-based survey. Avoid sending to the same user more than once per month across all survey types combined.

Authored by James Morton

Founder of Quackback. Building open-source feedback tools.
