Product manager interviews test how you think, not what you know. The interviewer already knows the answer is not obvious — they want to see your framework, your trade-offs, and whether you can structure ambiguity into a clear decision.

This guide covers 16 questions across five categories, with frameworks for structuring your answers and examples of what strong responses look like. The questions are representative of what companies like Google, Meta, Amazon, Stripe, and growth-stage startups ask.

Product sense questions

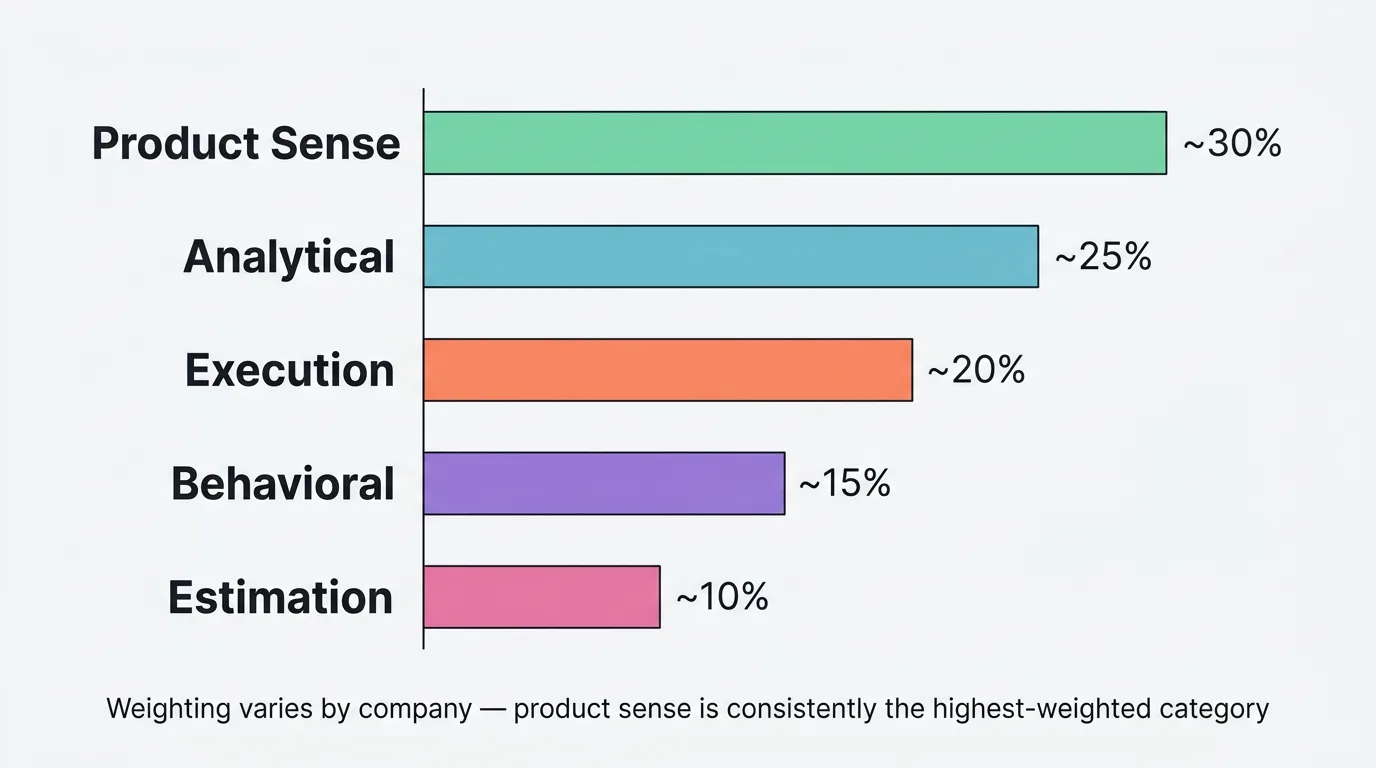

Product sense questions test your ability to think about users, define problems, and design solutions. They are typically the highest-weighted category in PM interviews.

1. "Design a product for elderly people to stay connected with their families."

What they are testing: Can you define a target user, identify their needs, and design a product that addresses those needs — not just list features?

Framework: Start by clarifying the user (age, tech comfort, living situation). Identify their core pain points (isolation, difficulty with technology, desire for connection). Brainstorm solutions that address those pain points. Evaluate and prioritize. Describe your recommendation with rationale.

Strong answer structure: "I would focus on elderly people living alone, aged 75+, with limited smartphone experience. Their primary pain point is feeling disconnected from grandchildren. I would design a simplified video calling device — a single-purpose tablet with one button that calls a pre-configured contact list. I would prioritize this over a general communication app because reducing friction is more important than adding features for this user. The Kano Model helps here — video calling is must-be functionality, while a simple hardware form factor is the delighter."

2. "How would you improve Instagram Stories?"

What they are testing: Can you analyze an existing product, identify opportunities for improvement, and prioritize them?

Framework: Clarify which user segment you are focusing on (creators, consumers, or advertisers). Identify current pain points through user behavior analysis. Generate improvement ideas. Evaluate using impact and effort. Recommend one or two changes with clear rationale.

Strong answer structure: Define the metric you are optimizing (engagement rate, time spent, creator retention). Identify the specific problem (e.g., creators with under 1,000 followers see low engagement on Stories, which discourages continued creation). Propose a solution (e.g., discovery features for Stories from small creators). Explain why this matters for the business (creator supply drives consumer engagement, which drives ad revenue).

3. "You are the PM for Spotify. A feature that lets users collaborate on playlists has been proposed. Would you build it?"

What they are testing: Can you evaluate a feature proposal critically instead of defaulting to "yes"?

Framework: Clarify the user need (do users actually want collaborative playlists?). Size the opportunity (how many users would use this?). Assess the effort (engineering, design, and maintenance cost). Evaluate against alternatives (what else could the team build with the same effort?). Make a recommendation.

Strong answer structure: Consider how you would validate demand before committing to build. "I would first check if this is a real user need by looking at feedback data and support requests. If collaborative playlists are a top-10 request by volume, the signal is strong. I would also analyze user behavior — are users already sharing playlists via links? That workaround pattern suggests unmet demand."

4. "Pick a product you use daily. What would you change about it?"

What they are testing: Do you think critically about products in your daily life? Can you articulate a problem clearly?

Framework: Choose a product you genuinely use and have opinions about. Identify one specific friction point. Explain why it matters. Propose a solution. Explain the trade-off.

Avoid: Generic answers about popular products. "I would make Google Search faster" says nothing. "Google Maps shows me restaurant hours but not current wait times — I would add real-time wait estimates using aggregated location data because 40% of my restaurant decisions happen at the moment I arrive" shows actual product thinking.

Analytical questions

5. "A key metric dropped 15% week-over-week. How do you investigate?"

What they are testing: Can you diagnose a data problem systematically?

Framework: Is it a data issue (tracking broke, reporting changed)? Is it seasonal or external (holiday, competitor launch, news event)? Is it a product issue (bug, UX regression, feature removal)? Is it a specific segment (one country, one device, one user cohort)?

Strong answer structure: Start broad, narrow down. "First, I would check if the metric definition or tracking changed. Then I would segment the drop — is it across all users or concentrated in a specific geography, device, or cohort? Then I would look at the product changelog — did anything ship that week? Finally, I would check external factors. The goal is to isolate the cause before proposing a fix."
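
The segmentation step can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the segment names and weekly counts are hypothetical, and `segment_changes` is a made-up helper. It shows why you look at each segment's contribution to the overall drop rather than only its own percentage change.

```python
def segment_changes(last_week, this_week):
    """Return per-segment changes, largest contributor to the drop first."""
    total_last = sum(last_week.values())
    rows = []
    for segment, before in last_week.items():
        after = this_week.get(segment, 0)
        delta = after - before
        rows.append({
            "segment": segment,
            # Change relative to the segment's own baseline
            "pct_change": delta / before if before else 0.0,
            # Share of the overall metric change explained by this segment
            "contribution": delta / total_last,
        })
    # Most negative contribution first
    return sorted(rows, key=lambda r: r["contribution"])


# Hypothetical weekly counts of the key metric, split by device
last_week = {"iOS": 4000, "Android": 4000, "Web": 2000}
this_week = {"iOS": 3950, "Android": 2600, "Web": 1950}

changes = segment_changes(last_week, this_week)
for row in changes:
    print(f'{row["segment"]:8} {row["pct_change"]:+.1%} '
          f'(contribution {row["contribution"]:+.1%})')
```

In this made-up data the overall drop is 15%, but almost all of it comes from one segment, which points the investigation at that platform's changelog rather than at the product as a whole.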

6. "How would you measure the success of a new onboarding flow?"

What they are testing: Do you pick the right metrics and avoid vanity metrics?

Strong answer structure: "I would track three things. First, activation rate: the percentage of new users who complete the key action that correlates with retention, which is an input to our north star metric. Second, time-to-first-value: how long it takes a new user to reach that action. Third, 30-day retention for the cohort that went through the new flow versus the cohort that went through the old one. I would not optimize for onboarding completion rate alone because a user can complete onboarding without actually getting value from the product."
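
To make the three metrics concrete, here is a small sketch of how they could be computed per cohort. Everything in it is an assumption for illustration: the record fields (`hours_to_activation`, `retained_day_30`), the `onboarding_metrics` helper, and the tiny cohorts are hypothetical, and a real comparison would need far larger samples and a proper experiment.

```python
from statistics import median


def onboarding_metrics(users):
    """Compute activation rate, median time-to-first-value, and 30-day retention.

    Each user is a dict with:
      hours_to_activation: float or None (None means never activated)
      retained_day_30: bool
    """
    total = len(users)
    activation_times = [
        u["hours_to_activation"]
        for u in users
        if u["hours_to_activation"] is not None
    ]
    return {
        "activation_rate": len(activation_times) / total,
        "median_hours_to_first_value": (
            median(activation_times) if activation_times else None
        ),
        "retention_30d": sum(u["retained_day_30"] for u in users) / total,
    }


def make_cohort(rows):
    """Build user records from (hours_to_activation, retained_day_30) pairs."""
    return [{"hours_to_activation": h, "retained_day_30": r} for h, r in rows]


# Hypothetical cohorts: old flow versus new flow
old_flow = make_cohort([(30, True), (None, False), (None, False), (50, False)])
new_flow = make_cohort([(4, True), (6, True), (None, False), (10, False)])

old_metrics = onboarding_metrics(old_flow)
new_metrics = onboarding_metrics(new_flow)
print("old:", old_metrics)
print("new:", new_metrics)
```

Note that onboarding completion does not appear anywhere in the calculation, which matches the point of the answer: the comparison is about reaching value and staying, not finishing the flow.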

7. "What metrics would you track for a feedback tool?"

What they are testing: Can you identify metrics that capture both user value and business value?

Strong answer structure: "The north star metric would be monthly active feedback contributors — users who submit, vote, or comment. Input metrics: widget installation rate (breadth), submissions per active user (depth), weekly return rate (frequency), and time from installation to first submission (efficiency). Business metrics: conversion from free to paid, net revenue retention, and support ticket deflection rate. I would avoid vanity metrics like total registered users — what matters is active participation."

Execution questions

8. "You have five feature requests from five different enterprise customers. How do you prioritize?"

What they are testing: Can you make a principled prioritization decision under pressure?

Strong answer structure: "I would not prioritize by customer size alone. I would use a framework that balances demand breadth, strategic fit, and effort. Specifically, I would apply RICE scoring: Reach (how many customers beyond these five would benefit?), Impact (how much does this move our key metric?), Confidence (how sure are we about the impact?), and Effort (engineering weeks). I would also check our feedback board to see if any of these requests have votes from non-enterprise users — broad demand is a stronger signal than concentrated demand."
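
If the interviewer asks you to show the scoring, a quick sketch helps. The example below uses the commonly used RICE formulation (Reach × Impact × Confidence ÷ Effort). The request names, the numbers, and the impact scale are all hypothetical, chosen only to show how a request from one large customer can rank below a request with broader reach.

```python
from dataclasses import dataclass


@dataclass
class FeatureRequest:
    name: str
    reach: int         # customers who would benefit per quarter (assumed unit)
    impact: float      # assumed scale: 0.25 (minimal) up to 3 (massive)
    confidence: float  # 0.0 to 1.0
    effort: float      # engineering weeks

    @property
    def rice(self) -> float:
        # Common RICE formulation: (Reach * Impact * Confidence) / Effort
        return self.reach * self.impact * self.confidence / self.effort


# Hypothetical requests with made-up estimates
requests = [
    FeatureRequest("SSO integration", reach=40, impact=2.0, confidence=0.8, effort=4),
    FeatureRequest("Custom report export", reach=5, impact=3.0, confidence=0.5, effort=6),
    FeatureRequest("Bulk user import", reach=25, impact=1.0, confidence=1.0, effort=2),
]

ranked = sorted(requests, key=lambda r: r.rice, reverse=True)
for request in ranked:
    print(f"{request.name:22} RICE = {request.rice:.2f}")
```

The high-impact but narrow request lands last because reach and confidence are low relative to effort, which is the "broad demand beats concentrated demand" argument expressed in numbers.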

9. "How would you build a product roadmap for the next quarter?"

What they are testing: Can you connect strategy to execution?

Strong answer structure: "I would start with the team's strategic objectives for the quarter. Then I would inventory inputs: customer feedback data (top-voted requests by segment), technical debt flagged by engineering, competitive gaps, and metrics that need improvement. I would score candidates using RICE or a similar framework, then group them into themes. The roadmap would have two to three themes with clear outcomes, not a feature list. I would share it publicly so customers can see what is coming and provide early feedback."

10. "A stakeholder insists on building a feature you disagree with. What do you do?"

What they are testing: Can you navigate conflict without being either a pushover or a blocker?

Strong answer structure: "I would start by understanding their reasoning — what data or customer feedback drives their conviction? Often disagreements dissolve when both sides share their evidence. If we still disagree, I would propose a way to test the hypothesis cheaply — a prototype, a survey, or a Kano analysis to validate demand. If the data supports their position, I would change my mind. If it supports mine, I would share the data respectfully. The goal is decisions based on evidence, not authority."

Behavioral questions

11. "Tell me about a time you launched a product that failed."

What they are testing: Self-awareness, learning from failure, intellectual honesty.

Strong answer structure: Describe the product, what went wrong, and what you learned. Be specific about your role in the failure. Do not blame external factors. The best answers show that you identified the failure early, took action to mitigate it, and changed your approach going forward. "We skipped user research and built based on internal assumptions. The feature launched to minimal adoption. I learned to validate demand with customer feedback before committing engineering resources."

12. "Describe a time you had to make a decision with incomplete data."

What they are testing: Comfort with ambiguity, decision-making process.

Strong answer structure: Describe the decision, what data you had and what was missing, how you assessed the risk of being wrong, and what you did to reduce uncertainty. "I had usage data but no qualitative feedback. I ran five customer interviews in one week to fill the gap, made a decision based on the combined signal, and set a review point at 30 days to check if the decision held up."

13. "Tell me about a time you influenced without authority."

What they are testing: Collaboration skills, persuasion, cross-functional leadership.

Strong answer structure: Set the scene (who needed convincing, why they disagreed). Explain your approach (data, storytelling, prototyping). Describe the outcome. The best answers show that you changed someone's mind through evidence and empathy, not by escalating or going around them.

14. "How do you handle competing priorities from different teams?"

What they are testing: Stakeholder management, strategic thinking.

Strong answer structure: "I make the trade-offs visible. I create a shared view of all requests with their business impact, put them through the same prioritization framework, and let the data make the argument. When two requests have similar scores, I look at dependencies and sequencing — sometimes doing A first makes B easier. When there is no clear winner, I involve the stakeholders in the trade-off conversation directly. Transparency reduces politics."

Estimation questions

15. "How many Slack messages are sent per day globally?"

What they are testing: Structured thinking, reasonable assumptions, math under pressure.

Framework: Start with what you know. Break the problem into components. Make explicit assumptions. Calculate. Sanity check.

Strong answer structure: "Slack has roughly 47 million daily active users. The average knowledge worker probably sends 50-100 messages per day on Slack. Call it 75 as a middle estimate. Heavy users (engineers, support teams) might send 150+, but casual users send fewer. So: 47 million × 75 = roughly 3.5 billion messages per day." (Actual figure: Slack reports approximately 1.5 billion messages per day, so this estimate is within an order of magnitude but about 2.3 times too high. The reported figure implies an average closer to 30 messages per user per day, which is worth calling out in your sanity check.)
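
The calculation and sanity check can be written out explicitly. This sketch uses only the figures quoted in the answer above (the user count, the 50-100 range, and the reported daily total); the variable names are illustrative.

```python
# Inputs taken from the answer above; all are rough assumptions
daily_active_users = 47_000_000
messages_low, messages_mid, messages_high = 50, 75, 100

estimate_low = daily_active_users * messages_low
estimate_mid = daily_active_users * messages_mid
estimate_high = daily_active_users * messages_high

# Sanity check against the reported figure quoted in the text
reported_per_day = 1_500_000_000
ratio = estimate_mid / reported_per_day
implied_per_user = reported_per_day / daily_active_users

print(f"Range: {estimate_low / 1e9:.2f}B to {estimate_high / 1e9:.2f}B per day")
print(f"Mid estimate: {estimate_mid / 1e9:.2f}B per day")
print(f"Mid estimate is {ratio:.2f}x the reported figure")
print(f"Implied messages per user per day: {implied_per_user:.0f}")
```

Stating the range and the implied per-user average out loud shows the interviewer which assumption you would revisit first.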

16. "Estimate the revenue of Uber Eats in the US."

Framework: US population → people who use food delivery → order frequency → average order value → Uber Eats market share → take rate.

Strong answer structure: Work through each step explicitly. "330M US population. About 30% use food delivery apps, so roughly 100M potential users. Maybe 40% are active monthly: 40M. Average 3 orders/month at $30/order = $90/month per user. 40M × $90 × 12 ≈ $43.2B in total US food delivery GMV. Uber Eats has roughly 25% market share, so about $10.8B GMV. With a 15-20% take rate, revenue is roughly $1.6-2.2B annually."
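
The same funnel can be expressed as a small function, which makes it easy to see how sensitive the result is to each assumption. This is a sketch only: `food_delivery_revenue` is a hypothetical helper, and every input is one of the rough assumptions from the answer above (including the rounding of potential users to 100M), not measured data.

```python
def food_delivery_revenue(potential_users, active_share, orders_per_month,
                          avg_order_value, market_share, take_rate):
    """Walk the funnel from potential users down to annual platform revenue."""
    active_users = potential_users * active_share
    market_gmv = active_users * orders_per_month * avg_order_value * 12
    platform_gmv = market_gmv * market_share
    revenue = platform_gmv * take_rate
    return market_gmv, platform_gmv, revenue


# Shared assumptions from the worked answer (all rough guesses)
assumptions = dict(
    potential_users=100e6,  # about 30% of a 330M population, rounded
    active_share=0.40,
    orders_per_month=3,
    avg_order_value=30,
    market_share=0.25,
)

market_gmv, platform_gmv, revenue_low = food_delivery_revenue(**assumptions, take_rate=0.15)
_, _, revenue_high = food_delivery_revenue(**assumptions, take_rate=0.20)

print(f"Market GMV:   ${market_gmv / 1e9:.1f}B")
print(f"Platform GMV: ${platform_gmv / 1e9:.1f}B")
print(f"Revenue:      ${revenue_low / 1e9:.2f}B to ${revenue_high / 1e9:.2f}B")
```

Changing a single input, such as orders per month, and rerunning is a quick way to rehearse the "which assumption matters most" follow-up question.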

How to prepare

Build frameworks, not scripts. Memorizing answers fails because interviewers ask variations. Understanding frameworks (RICE for prioritization, user-centric design for product sense, metric trees for analytics) lets you adapt to variations instead of reciting a script.

Practice with real products. Pick three products you use daily. For each, identify one thing you would change, one metric you would track, and one feature you would cut. This builds the product intuition that interviews test.

Study the company's product. Use it. Read their changelog. Check their feedback board if they have one. Understanding their product shows genuine interest and lets you reference specific features in your answers.

Prepare two to three strong stories. Most behavioral questions can be answered with a well-prepared story about a launch, a failure, a conflict, or a data-driven decision. Rehearse these stories until you can tell them in under two minutes.

Frequently asked questions

How many rounds are in a PM interview?

Typically four to five: a recruiter screen, a hiring manager conversation, and two to three panel interviews covering product sense, analytical thinking, execution, and behavioral questions. Some companies add a case study or take-home assignment. The total process usually takes two to four weeks.

What is the most common PM interview mistake?

Jumping to a solution before defining the problem. In product sense questions, spend at least 30% of your time understanding the user and the problem before proposing solutions. In analytical questions, spend time defining the metric before analyzing the data.

Do I need a technical background for PM interviews?

Not usually, unless the role is specifically "Technical PM" or the company is engineering-led (Stripe, Linear, Vercel). Most PM interviews test product thinking, analytical reasoning, and communication skills. You should be able to discuss trade-offs with engineers, but you do not need to write code.

How do I answer "Why this company?"

Be specific. "I love your product" is generic. "I have been using your product for six months and noticed that your roadmap prioritization seems to weight enterprise requests heavily — I would bring a more balanced approach using structured feedback data from all segments" shows you have done your homework and have a point of view.

How important is the estimation question?

Less important than product sense and execution. Estimation questions test structured thinking and comfort with numbers, not mathematical precision. Being within an order of magnitude and showing a clear decomposition is sufficient. Do not spend disproportionate prep time on estimation at the expense of product sense practice.

Authored by James Morton

Founder of Quackback. Building open-source feedback tools.
