Two-thirds of new products fail within two years of launch. The most common cause is not bad engineering. It is a bad process — or no process at all.

The figure comes from a Columbia Business School study, which found that 66% of new products fail within two years. Products in the top 20% for differentiation have a 98% success rate, compared with 18.4% for the bottom 20%. The difference is not talent. It is how teams move from idea to shipped product.

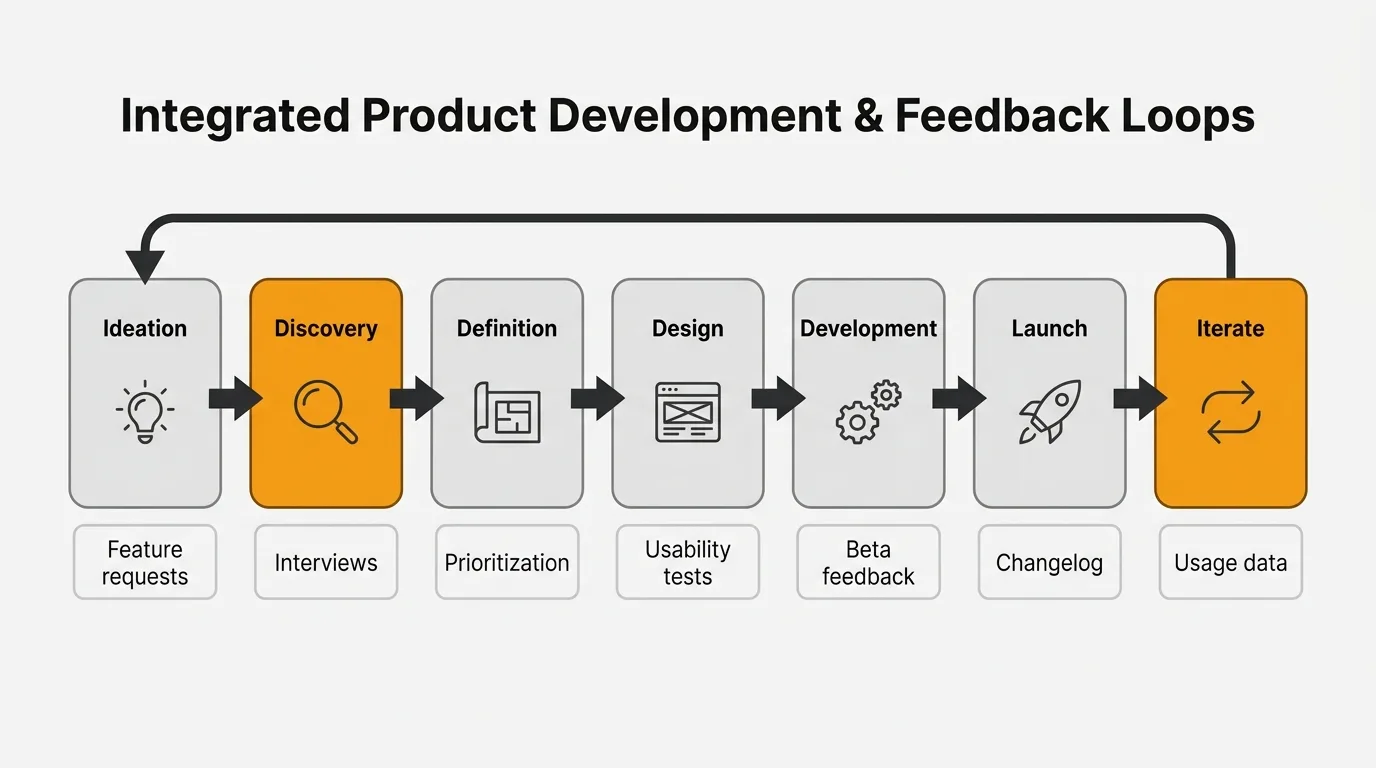

This guide covers the seven stages of product development, what happens at each stage, and where customer feedback fits in. If you are building a new product or adding major features to an existing one, this is the process that separates the 34% that succeed from the 66% that do not.

The seven stages

1. Ideation

What happens: You generate and capture ideas for what to build. Ideas come from customer feedback, market research, competitive analysis, team brainstorms, and strategic objectives.

Who is involved: Product managers, founders, customer-facing teams (support, sales, CS).

Key outputs: A list of candidate ideas with rough descriptions of the problem each one solves.

Where teams go wrong: Jumping to solutions before understanding the problem. "We should build a Slack integration" is a solution. "Our users lose feedback that comes through Slack" is a problem. Start with problems.

How feedback fits in: Feature request boards and voting are the highest-signal source of ideation input. They tell you not just what customers want, but how many customers want it. Support ticket analysis and sales call notes add context that pure vote counts miss.
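
To illustrate why "how many customers want it" differs from a raw vote count, the sketch below tallies both from a hypothetical feedback export. The record shape (`customer`, `request`) and the sample data are assumptions made for illustration, not the format of any particular feedback tool.

```python
from collections import defaultdict

# Hypothetical feedback export: one record per vote or request.
# Field names and values are invented for illustration.
feedback = [
    {"customer": "acme", "request": "slack-integration"},
    {"customer": "acme", "request": "slack-integration"},
    {"customer": "globex", "request": "slack-integration"},
    {"customer": "initech", "request": "csv-export"},
    {"customer": "globex", "request": "csv-export"},
    {"customer": "umbrella", "request": "csv-export"},
]


def summarize_requests(records):
    """Return {request: (total_votes, distinct_customers)}."""
    votes = defaultdict(int)
    customers = defaultdict(set)
    for record in records:
        votes[record["request"]] += 1
        customers[record["request"]].add(record["customer"])
    return {req: (votes[req], len(customers[req])) for req in votes}


summary = summarize_requests(feedback)

# Rank by distinct customers first, then by raw votes.
ranked = sorted(
    summary.items(),
    key=lambda item: (item[1][1], item[1][0]),
    reverse=True,
)
for request, (total, distinct) in ranked:
    print(f"{request}: {total} votes from {distinct} customers")
```

In this sample both requests have three votes, but one customer voted twice for the Slack integration, so it ranks below the CSV export once you count distinct customers.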

2. Discovery and research

What happens: You validate that the problem is real, painful, and worth solving. You talk to customers, analyze usage data, study competitors, and size the opportunity.

Who is involved: Product managers, UX researchers, data analysts.

Key outputs: Problem validation, user personas, opportunity sizing, competitive landscape.

Where teams go wrong: Skipping this stage entirely. Research shows 93% of dedicated researchers do pre-design research, but only 55% of companies overall conduct any form of UX testing. The 45% that skip discovery are building on assumptions.

How feedback fits in: Customer interviews are the gold standard, but they do not scale. AI-powered feedback analysis can surface patterns across hundreds of feedback submissions, support tickets, and reviews — identifying which problems are most painful before you invest in interviews. For a deeper framework, see our guide on continuous discovery habits.

3. Definition and planning

What happens: You scope the solution. What are you building? What are you not building? How will you measure success? You write specs, prioritize features, and build a roadmap.

Who is involved: Product managers, engineering leads, design leads.

Key outputs: Product requirements, prioritized feature list, success metrics, timeline estimates.

Where teams go wrong: Over-specifying upfront. A detailed 50-page spec for a feature that has never been tested with users is waste. Define enough to start building, then learn from what you ship.

How feedback fits in: Prioritization frameworks like RICE and MoSCoW use customer demand as an input. Vote counts, request frequency, and customer segment data from your feedback tool inform which features make the cut. A prioritization matrix helps you weigh impact against effort using real data instead of gut feel.
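
RICE is commonly computed as reach times impact times confidence, divided by effort. Here is a minimal sketch of that arithmetic. The feature names, the numbers, and the units (customers per quarter, person-months) are assumptions chosen for illustration; in practice, reach would come from the request counts in your feedback tool.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    reach: float       # customers affected per quarter (assumed unit)
    impact: float      # relative impact per customer, e.g. 0.25 to 3
    confidence: float  # 0.0 to 1.0
    effort: float      # person-months (assumed unit)

    @property
    def rice(self) -> float:
        # RICE score = (reach * impact * confidence) / effort
        return self.reach * self.impact * self.confidence / self.effort


# Hypothetical candidates with invented numbers.
candidates = [
    Candidate("slack-integration", reach=400, impact=2, confidence=0.8, effort=4),
    Candidate("csv-export", reach=150, impact=1, confidence=1.0, effort=1),
    Candidate("dark-mode", reach=900, impact=0.5, confidence=0.5, effort=3),
]

# Highest score first.
prioritized = sorted(candidates, key=lambda c: c.rice, reverse=True)
for c in prioritized:
    print(f"{c.name}: {c.rice:.0f}")
```

The feature with the largest reach (dark mode) scores lowest here because its impact and confidence are both low. Catching cases like this is the reason to use a formula instead of sorting by request volume alone.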

4. Design and prototyping

What happens: Designers create wireframes, mockups, and interactive prototypes. The team tests concepts with users before writing production code.

Who is involved: Product designers, UX researchers, product managers.

Key outputs: Wireframes, prototypes, usability test results.

Where teams go wrong: Treating design as "making it pretty" instead of "making it work." Design is problem-solving. A beautiful interface that users cannot figure out is a failed design.

How feedback fits in: Early usability testing with prototypes catches fundamental problems that are expensive to fix later. Internal dogfooding — using the prototype yourself — catches obvious issues. But neither replaces testing with real users who do not know how the feature is supposed to work.

5. Development and testing

What happens: Engineering builds the feature in sprints or iterations. QA runs functional and regression tests. Beta programs put the feature in front of real users.

Who is involved: Engineers, QA, product managers, beta users.

Key outputs: Working software, test results, beta feedback.

Where teams go wrong: Treating "development complete" as "done." Code that passes tests is not the same as a feature that solves the user's problem. Beta programs bridge this gap by exposing the feature to real workflows before general release.

How feedback fits in: Beta feedback is the last chance to catch problems before they hit your full user base. A feedback widget embedded in the beta version makes it easy for testers to report issues in context, with screenshots and session details attached.

6. Launch

What happens: The feature ships to all users. Marketing, sales, support, and documentation are coordinated. The launch is communicated through changelogs, emails, in-app announcements, and social media.

Who is involved: Product, marketing, sales, support, documentation.

Key outputs: Release notes, changelog entries, support documentation, sales enablement materials.

Where teams go wrong: Under-investing in launch communication. Speed does matter: companies lose 33% of after-tax profit by shipping six months late, but only 3.5% by going 50% over the development budget. But shipping fast is only half the job. Customers also need to know what you shipped and how to use it. A great feature that nobody discovers is a wasted feature.

How feedback fits in: Product update announcements close the feedback loop. When you ship something a customer requested, telling them directly converts a one-time requester into a long-term advocate.

7. Analyze and iterate

What happens: You measure how the feature performs against success metrics. You track adoption, usage patterns, satisfaction, and business impact. Learnings feed back into the next cycle.

Who is involved: Product managers, data analysts, customer-facing teams.

Key outputs: Performance dashboards, iteration priorities, learnings for the next cycle.

Where teams go wrong: Moving on to the next feature without analyzing how the current one performed. If you do not measure, you do not learn. If you do not learn, you repeat the same mistakes.

How feedback fits in: Post-launch feedback is the richest signal for what to build next. Feature-specific feedback, NPS changes, and support ticket patterns tell you whether the feature solved the problem or created new ones. This data feeds directly back into Stage 1, creating a continuous cycle.
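
As one example of tracking an NPS change, the sketch below uses the standard Net Promoter Score definition: the percentage of promoters (scores of 9 or 10) minus the percentage of detractors (scores of 0 to 6). The survey responses are invented for illustration.

```python
def net_promoter_score(scores):
    """NPS = % promoters (9-10) minus % detractors (0-6), on 0-10 survey scores."""
    if not scores:
        raise ValueError("need at least one survey response")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical survey responses before and after a launch.
before_launch = [10, 9, 8, 7, 6, 5, 9, 3, 8, 10]
after_launch = [10, 9, 9, 8, 7, 6, 9, 8, 10, 10]

change = net_promoter_score(after_launch) - net_promoter_score(before_launch)
print(f"NPS change: {change:+.0f} points")
```

A shift in NPS does not show that the feature caused it. Read it alongside feature-specific feedback and support ticket patterns.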

Choosing a framework

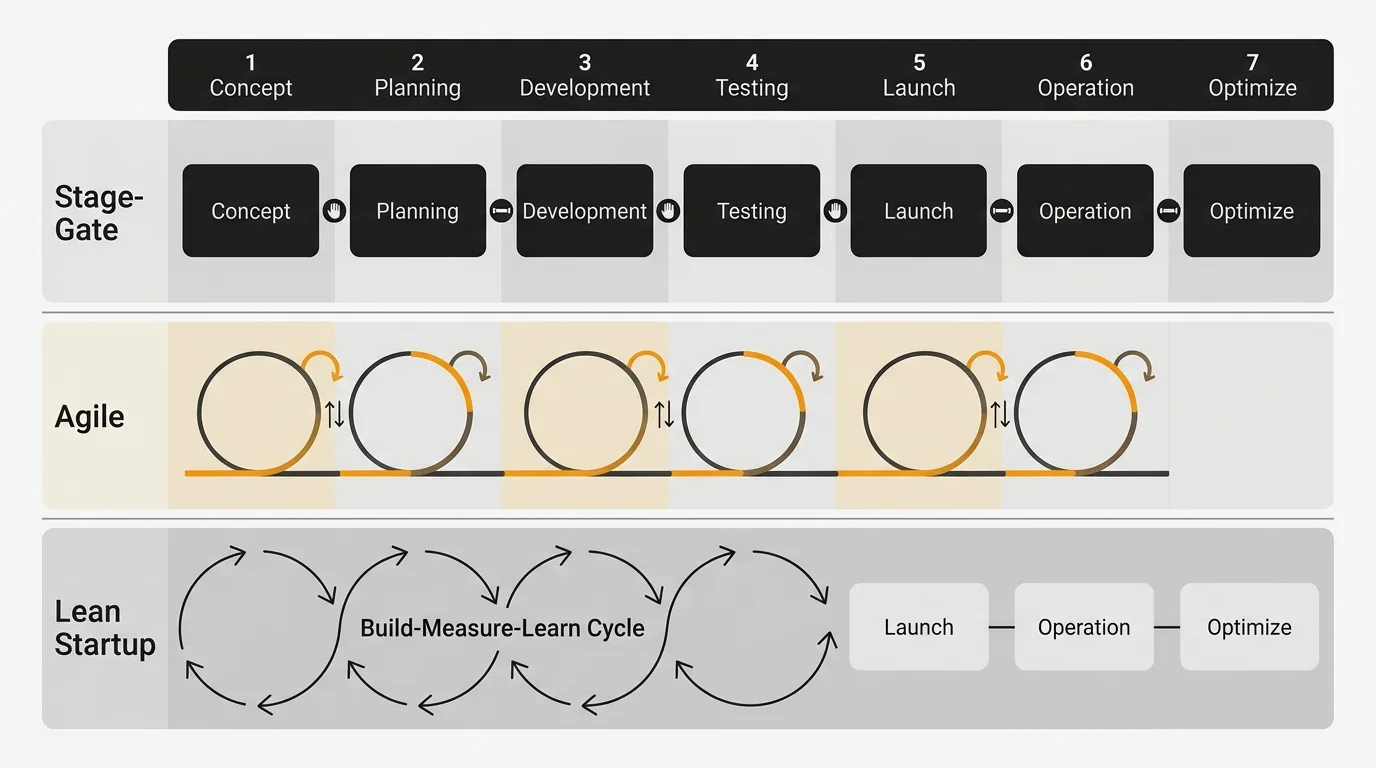

The seven stages above are framework-agnostic. How you execute each stage depends on which methodology fits your team and context.

Stage-Gate

Sequential phases separated by approval checkpoints ("gates"). Each gate requires specific deliverables before the project advances. Best for hardware, regulated industries, and organizations that need formal governance. Risk is controlled upfront through detailed planning.

Agile

Iterative sprints (typically two weeks) that ship increments of working software. Requirements evolve based on what you learn each sprint. Best for software teams with changing requirements. Risk is managed by shipping early and adapting continuously.

Lean Startup

Build-Measure-Learn loops focused on testing hypotheses with minimum viable products. Best for early-stage products and unvalidated markets. Risk is managed by testing cheaply before investing heavily. See our guide on value hypotheses for the foundation of this approach.

Hybrid (most common)

In practice, most teams blend these approaches. Research found that 100% of physical product firms identified as "best practice" use Agile sprints between traditional Stage-Gate phases. The frameworks are not mutually exclusive.

B2B vs B2C differences

The process is the same, but the execution differs.

| Dimension | B2B | B2C |
|---|---|---|
| Feedback collection | Direct relationships, smaller sample, deeper insight | Aggregated at scale, surveys, analytics, A/B tests |
| Validation | Pilots, POCs, design partnerships with key accounts | A/B tests, soft launches, feature flags |
| Release cadence | Slower, with migration concerns and customer training | Faster, driven by competitive pressure |
| UX tolerance | Users tolerate clunky UX if it solves workflow problems | Users abandon immediately if UX is poor |
| Sales cycle | 6-24 months, 6-10 decision-makers | Minutes to days, individual decision |

For B2B teams, the feedback connection is especially strong. With fewer customers and higher value per account, losing even one customer to a missed feature request is expensive. Structured feedback management is not optional — it is the primary input to your product development process.

Frequently asked questions

How long does product development take?

Estimates vary by source and by what counts as a product. Software products are often cited as taking 3 to 9 months from ideation to launch, consumer goods average around 13 months, and one reported median for digital products is 18 months. Speed matters more than budget: companies lose 33% of after-tax profit by shipping 6 months late.

What is the most important stage?

Discovery and research. Products with well-executed predevelopment activities had a 75% success rate compared to 31% without. A study found that 72% of failed products ignored customer feedback during development. Skipping discovery to "move fast" is the most expensive shortcut in product development.

Should we use Agile or Waterfall?

Most modern software teams use Agile or a hybrid approach. Pure waterfall (completing each stage fully before moving to the next) is rare in software because it assumes you can predict requirements upfront. Agile's iterative approach handles uncertainty better. That said, some stages (like discovery and definition) benefit from being completed before development starts.

How does customer feedback fit into product development?

Feedback should inform every stage: ideation (what to build), discovery (validating the problem), definition (prioritizing features), design (usability testing with prototypes), development (beta testing), launch (closing the loop), and iteration (measuring success). The finding that 72% of failed products ignored customer feedback is the strongest argument for making feedback a core input, not an afterthought.

What are the biggest reasons products fail?

The top reasons: solving a problem nobody has (lack of market need), poor timing, ignoring customer feedback, over-engineering the first version, and underinvesting in go-to-market. Most of these are process failures, not engineering failures, and most of them originate in the first three stages rather than the build stage.

Authored by James Morton

Founder of Quackback. Building open-source feedback tools.
