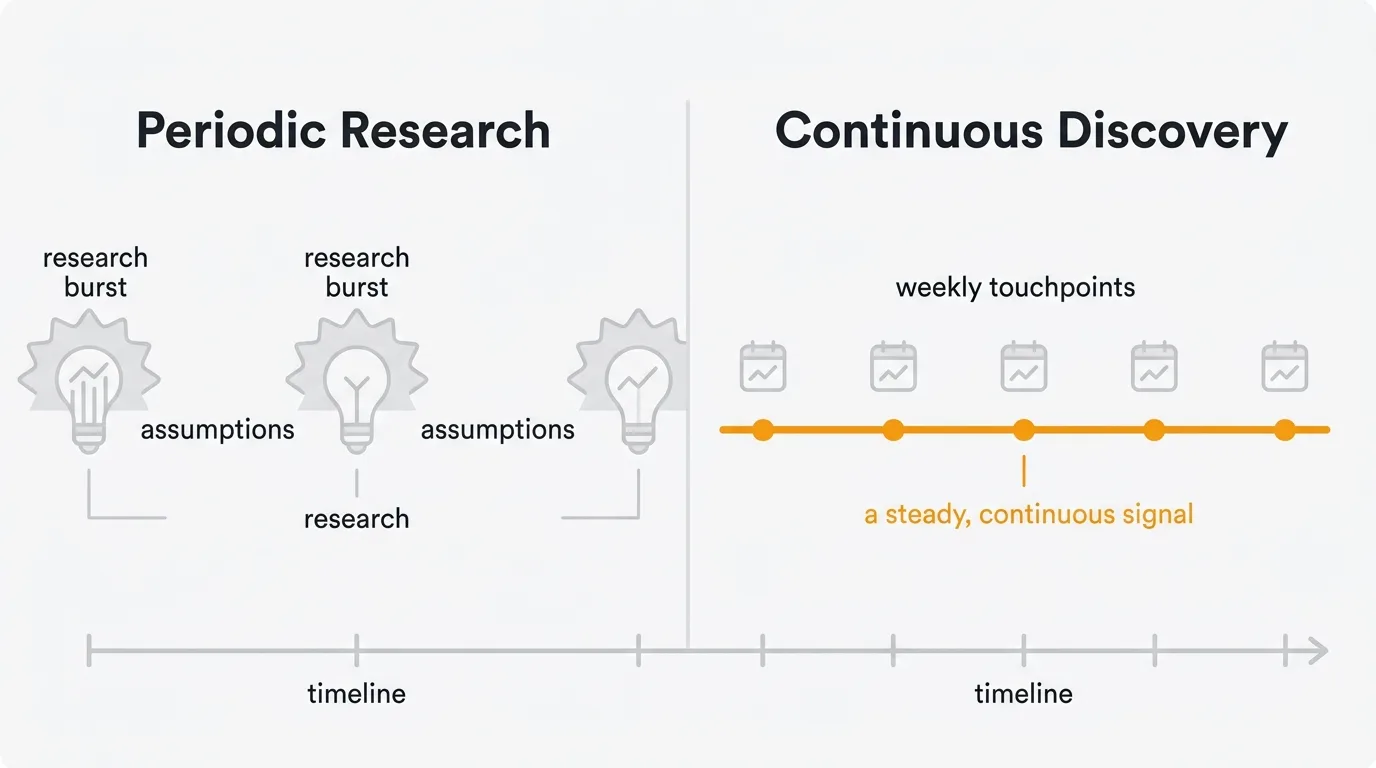

Continuous discovery is the practice of maintaining regular contact with users throughout the product development cycle — not just during research sprints. Teams that do it build better products because they make decisions with fresh signal, not stale assumptions.

Most product teams know they should talk to users more often. The problem is not awareness. It is habit. Research gets scheduled in bursts: a round of interviews before a big project kicks off, a survey after a launch goes sideways, a usability test when a designer insists. Between those bursts, the team operates on assumptions. And assumptions decay fast.

The continuous discovery framework offers a different approach. Instead of treating user research as a project, you treat it as a practice. Something you do every week, not every quarter. The result is a steady stream of insight that keeps your team grounded in real user problems instead of internal narratives about what users want.

What is continuous discovery

Continuous discovery is a framework popularized by Teresa Torres in her book Continuous Discovery Habits. The core idea is straightforward: product teams should engage with users at least once a week, every week, throughout the product development process.

Torres frames the practice around six mindsets:

- Outcome-oriented — Start with a clear desired outcome, not a feature idea

- Customer-driven — Talk to customers weekly, not quarterly

- Collaborative — The product trio (PM, designer, engineer) discovers together

- Visual — Map opportunities with the opportunity solution tree

- Experimental — Test assumptions before building

- Continuous — Discovery and delivery run in parallel, not in sequence

This differs from periodic research sprints in several important ways:

Frequency over depth. A 30-minute conversation every week gives you more useful signal than a two-week research sprint every quarter. Weekly contact means you catch changes in user behavior and needs as they happen, not months after the fact.

Embedded in the team. In periodic research, a researcher goes away, conducts studies, and returns with a report. In continuous discovery, the product trio — product manager, designer, and engineer — participates directly. The people making decisions are the same people hearing from users.

Connected to current work. Each week's conversations are tied to the problems and opportunities the team is actively working on. You are not building a general library of insights. You are answering specific questions about specific decisions you need to make this week.

Lightweight by design. Continuous discovery does not require a research lab or a formal recruitment process. It works with short interviews, quick prototype tests, feedback board analysis, and automated surveys. The bar for "enough" is deliberately low so that the team can clear it every week.

The shift is cultural. Teams that practice continuous discovery stop treating user insight as a phase and start treating it as an input that is always available.

The opportunity solution tree

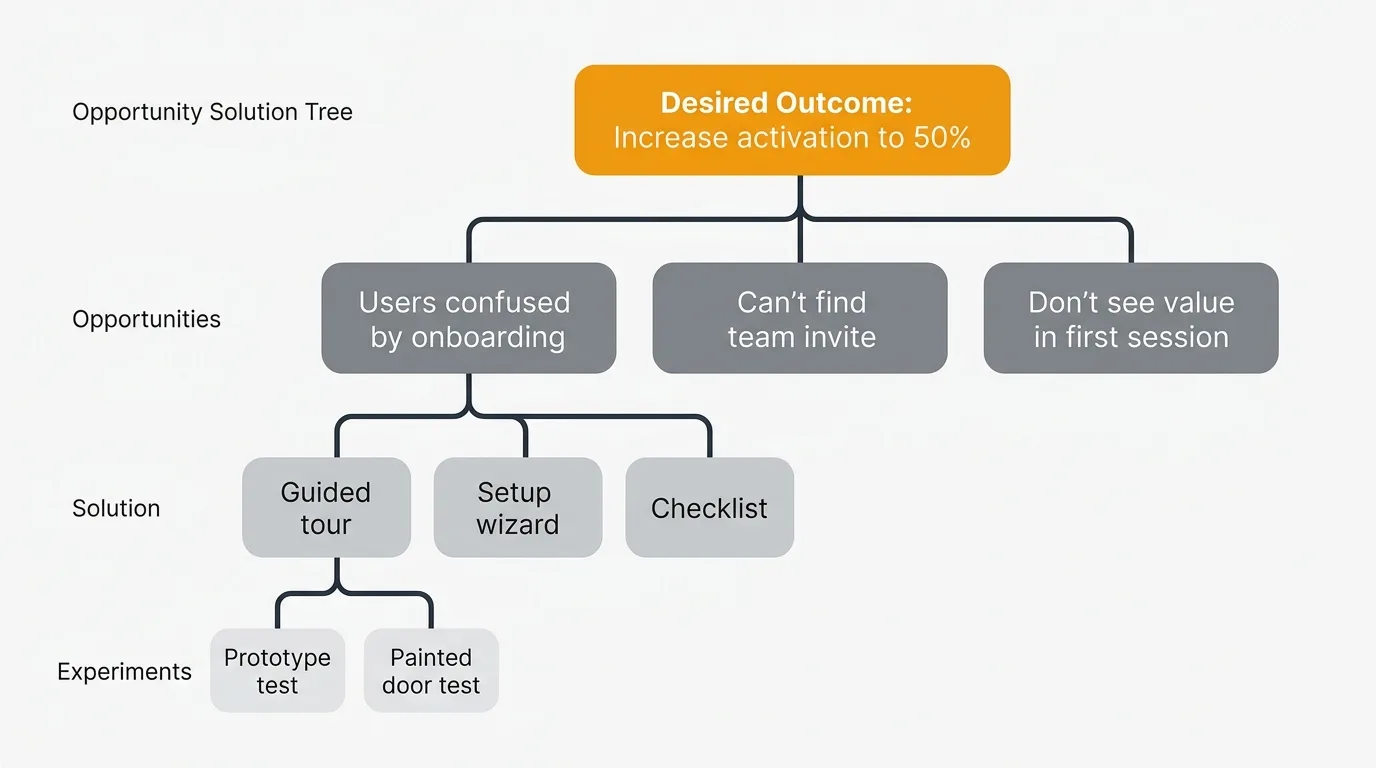

The opportunity solution tree (OST) is the core framework within continuous discovery for connecting what you learn from users to what you build. Teresa Torres introduced it as a way to structure the messy space between a desired outcome and the solutions you ship.

The tree has four levels:

Desired outcome. This is the measurable result your team is trying to achieve. "Increase activation rate from 30% to 50%" or "Reduce time to first value from 3 days to 1 hour." It comes from your product strategy, not from users. Users don't set your outcomes — they reveal the opportunities that help you reach them.

Opportunities. These are unmet needs, pain points, and desires you discover through user contact. "Users struggle to understand which features matter during onboarding." "Users can't find how to invite their team." Each opportunity is a problem space, not a solution.

Torres distinguishes three types of opportunities:

- Pain points — things that frustrate users about their current workflow ("I spend 2 hours per week copying feedback from Slack into a spreadsheet")

- Needs — things users want but don't have ("I need to see which features our enterprise users care about most")

- Desires — things that would make users' lives better even if they haven't articulated them ("I wish my feedback tool would auto-suggest which requests to merge")

Structure opportunities as a hierarchy. A top-level opportunity like "users struggle to prioritize feature requests" breaks down into sub-opportunities: "can't see demand across multiple channels," "no way to weight requests by customer value," "team disagrees on what matters." Each sub-opportunity is more specific and more actionable.

Solutions. For each opportunity, you brainstorm multiple possible solutions. The tree structure forces you to consider alternatives instead of jumping to the first idea. "Guided onboarding tour," "personalized setup wizard," and "interactive checklist" are three different solutions to the same opportunity.

The rule of thumb: generate at least three solutions for every opportunity before evaluating any of them. This prevents anchoring on the first idea and increases the odds of finding a better approach. Use "How might we..." prompts to expand the solution space.

Experiments. For each promising solution, you design a small experiment to test whether it actually addresses the opportunity. This might be a prototype test, a painted-door test, a concierge test, or a small-scale A/B test. The goal is to learn fast before committing engineering resources.

Here are four experiment types, ranked by effort:

| Experiment | Effort | What it tests | Example |
|---|---|---|---|
| One-question survey | 1 hour | Demand/interest | "Would you use X if we built it?" on your feedback board |
| Painted door test | 1 day | Real demand (not stated) | Add a button for the feature that tracks clicks but shows "coming soon" |
| Prototype test | 2-3 days | Usability and value | Clickable Figma prototype tested with 5 users |
| Concierge test | 1 week | End-to-end value | Manually deliver the solution to 10 users and measure outcomes |

The opportunity solution tree keeps your team honest. It prevents the common failure mode where someone has an idea, the team builds it, and nobody checks whether it actually solves a user problem. By mapping ideas back through opportunities to outcomes, every feature has a clear reason to exist.
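For teams that keep their tree in a script or a spreadsheet export, the four levels can be sketched as a small data structure. This is a minimal illustration, not Torres's notation: the `Node` class and the helpers `path_to` and `untested_solutions` are hypothetical names, and the sample entries reuse examples from this article.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Node:
    """One node in an opportunity solution tree (hypothetical structure)."""
    kind: str   # "outcome", "opportunity", "solution", or "experiment"
    title: str
    children: list[Node] = field(default_factory=list)

    def add(self, kind: str, title: str) -> Node:
        """Attach a child node and return it so calls can be chained."""
        child = Node(kind, title)
        self.children.append(child)
        return child


def path_to(root: Node, title: str) -> list[str] | None:
    """Return the chain of titles from the root outcome down to `title`."""
    if root.title == title:
        return [root.title]
    for child in root.children:
        sub = path_to(child, title)
        if sub is not None:
            return [root.title] + sub
    return None


def untested_solutions(root: Node) -> list[str]:
    """List solutions that have no experiment attached yet."""
    found = []
    is_solution = root.kind == "solution"
    if is_solution and not any(c.kind == "experiment" for c in root.children):
        found.append(root.title)
    for child in root.children:
        found.extend(untested_solutions(child))
    return found


outcome = Node("outcome", "Increase activation rate from 30% to 50%")
onboarding = outcome.add(
    "opportunity",
    "Users struggle to understand which features matter during onboarding",
)
tour = onboarding.add("solution", "Guided onboarding tour")
onboarding.add("solution", "Personalized setup wizard")
onboarding.add("solution", "Interactive checklist")
tour.add("experiment", "Prototype test with 5 users")

# Trace a solution back through its opportunity to the outcome.
print(path_to(outcome, "Interactive checklist"))
# Find solutions nobody has tested yet.
print(untested_solutions(outcome))
```

Tracing a path from any solution to the root is the "clear reason to exist" check in code form: a solution with no path to the outcome, or with no experiment under it, is a candidate for the next weekly review.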

How to build the continuous discovery habit

Knowing the framework is the easy part. Building the habit is where most teams stall. Here is a practical approach to making continuous discovery stick, broken into a 12-week adoption plan.

Week 1-4: Establish weekly user contact

Schedule weekly user touchpoints. Block 30-60 minutes per week for your product trio to talk to a user, watch a session replay, or review recent feedback. Put it on the calendar. Treat it like a standup — it happens every week regardless of what else is going on. The consistency matters more than the format.

Torres recommends "interview snapshots" — brief summaries of each user conversation captured in a consistent format. Use this template after every touchpoint:

- Date and participant: Who did you talk to? What segment are they in?

- Key quote: One verbatim statement that captures their core need

- Opportunity identified: What unmet need or pain point did you discover?

- Surprise: What did you learn that you did not expect?

- Next action: What should the team do with this insight?

Keep each snapshot to 5 lines or fewer. The constraint forces clarity. Store them in a shared document or channel where the entire trio can reference them during planning.
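If your team stores snapshots somewhere scriptable, the template can be enforced with a small record type. The sketch below is one possible shape, not a format prescribed by Torres: `InterviewSnapshot` and its field names are assumptions, and the participant, surprise, and next action in the example are invented sample values.

```python
from dataclasses import dataclass, fields
from datetime import date


@dataclass(frozen=True)
class InterviewSnapshot:
    """One user touchpoint, captured in the five-line template."""
    when: date
    participant: str    # who you talked to and which segment they are in
    key_quote: str      # one verbatim statement
    opportunity: str    # unmet need or pain point
    surprise: str       # what you did not expect
    next_action: str    # what the team should do with the insight

    def __post_init__(self) -> None:
        # Reject blank fields so every snapshot is complete.
        for f in fields(self):
            value = getattr(self, f.name)
            if isinstance(value, str) and not value.strip():
                raise ValueError(f"{f.name} must not be empty")

    def render(self) -> str:
        """Format the snapshot as five lines for a shared doc or channel."""
        return "\n".join([
            f"Date and participant: {self.when.isoformat()}, {self.participant}",
            f'Key quote: "{self.key_quote}"',
            f"Opportunity identified: {self.opportunity}",
            f"Surprise: {self.surprise}",
            f"Next action: {self.next_action}",
        ])


# Sample values are made up for illustration.
snapshot = InterviewSnapshot(
    when=date(2024, 3, 4),
    participant="Product manager, mid-market segment",
    key_quote="I spend 2 hours per week copying feedback from Slack into a spreadsheet",
    opportunity="Can't see demand across multiple channels",
    surprise="Sales calls, not support tickets, were the largest feedback source",
    next_action="Ask two more mid-market users how they collect sales feedback",
)
print(snapshot.render())
```

Refusing empty fields is a design choice: a snapshot with no surprise or no next action is usually a sign the conversation was not debriefed.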

Build a participant pipeline. The most common reason teams stop doing weekly interviews is recruitment friction. Solve this upfront. Add an in-app prompt that asks "Would you be open to a 20-minute chat about your experience?" Offer a small incentive (gift card, early access). Target 10-15 signups per month. You only need one per week.

Week 5-8: Automate always-on feedback

Automate feedback collection. You cannot sustain weekly discovery if every touchpoint requires manual recruitment and scheduling. Set up always-on feedback channels: a feature request board where users submit and vote on ideas, an in-app feedback widget that captures context automatically, and integrations that pull feedback from support tickets and sales calls into one place.

Review feedback data in product reviews. Make feedback analysis a standing agenda item in your weekly product review or planning session. Look at new feature requests, vote count changes, and recurring themes. This turns raw feedback into decision-ready input. You don't need to interview a user every week if you have a steady stream of structured feedback to analyze.

Here is a sample weekly discovery agenda (30 minutes):

- Review new feedback (10 min) — Scan new submissions and votes on your feedback board. Flag anything that maps to current opportunities.

- Share interview snapshot (5 min) — One team member presents their touchpoint from this week.

- Update the OST (10 min) — Add new opportunities, reassess existing ones, decide which solutions to test next.

- Plan next touchpoint (5 min) — Who are we talking to next week? What do we need to learn?

Week 9-12: Connect discovery to delivery

Connect insights to your roadmap. Every insight should lead somewhere. When you identify an opportunity worth pursuing, add it to your opportunity solution tree. When a solution passes its experiment, move it to your roadmap. When you ship it, close the loop by notifying the users who requested it. The feedback-to-roadmap pipeline is what makes discovery actionable instead of academic.

Start small and expand. If your team currently does zero user contact between quarterly research sprints, don't try to implement the full framework in week one. The 12-week plan above builds gradually: weekly conversations first, then automated feedback, then structured opportunity mapping. Build the habit before you build the system.

By week 12, your team should have: a populated opportunity solution tree, a steady stream of at least 4 user touchpoints per month, an active feedback board with vote data, and a clear connection between what users tell you and what shows up on your roadmap.

Assumption testing

Before building anything, continuous discovery teams identify and test their riskiest assumptions. Every solution carries assumptions about desirability (do users want this?), usability (can users figure it out?), feasibility (can we build it?), and viability (does it work for the business?).

Torres recommends this process:

1. List assumptions for each solution. For a "feedback board with AI-powered triage" solution, assumptions might include: users will submit feedback through the board (desirability), users can find and use the voting mechanism (usability), the AI can accurately categorize feedback (feasibility), and reducing manual triage saves enough time to justify the cost (viability).

2. Map assumptions on a risk matrix. Plot each assumption on two axes: certainty that it is true on the horizontal axis (low to high) and criticality to the solution's success on the vertical axis (low to high). Test the assumptions in the upper-left quadrant first: high criticality, low certainty. Those are your "leap of faith" assumptions.

3. Design the smallest possible test. You do not need to build the full feature to test an assumption. A one-question survey can test desirability. A paper prototype can test usability. A technical spike can test feasibility. A spreadsheet model can test viability.

4. Set a pass/fail criterion before you run the test. "If 60% of interviewed users say they would use this feature at least weekly, the desirability assumption passes." Without a pre-set criterion, you will rationalize any result as positive.
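The four steps can be sketched in a few lines of code. This is an illustration under stated assumptions: the 1-to-5 scoring scale, the cutoffs in `leap_of_faith`, and the scores assigned to each assumption are invented for the example; only the assumptions themselves and the 60% threshold come from the text above.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Assumption:
    statement: str
    category: str      # desirability, usability, feasibility, or viability
    certainty: int     # 1 (pure guess) to 5 (strong evidence); assumed scale
    criticality: int   # 1 (minor) to 5 (solution fails without it); assumed scale


def leap_of_faith(
    assumptions: list[Assumption],
    min_criticality: int = 4,
    max_certainty: int = 2,
) -> list[Assumption]:
    """Return high-criticality, low-certainty assumptions, riskiest first."""
    risky = [
        a for a in assumptions
        if a.criticality >= min_criticality and a.certainty <= max_certainty
    ]
    return sorted(risky, key=lambda a: (-a.criticality, a.certainty))


def passes(positive: int, total: int, threshold: float) -> bool:
    """Compare a test result against a criterion fixed before the test ran."""
    if total <= 0:
        raise ValueError("total must be positive")
    return positive / total >= threshold


# Scores are illustrative guesses, not data.
assumptions = [
    Assumption("Users will submit feedback through the board", "desirability", 2, 5),
    Assumption("Users can find and use the voting mechanism", "usability", 4, 3),
    Assumption("The AI can accurately categorize feedback", "feasibility", 1, 5),
    Assumption("Reduced triage time justifies the cost", "viability", 3, 4),
]

for a in leap_of_faith(assumptions):
    print(a.category, "-", a.statement)

# Criterion set in advance: 60% of interviewed users would use it weekly.
print(passes(positive=7, total=10, threshold=0.60))
print(passes(positive=4, total=10, threshold=0.60))
```

Passing the threshold as an argument, rather than deciding it after looking at the counts, mirrors step 4: the criterion exists before the result does.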

Outputs vs outcomes

Continuous discovery requires a fundamental mindset shift about what "progress" means. Most product teams measure progress in outputs: features shipped, story points completed, releases deployed. Continuous discovery reframes progress around outcomes: user problems solved, activation rates improved, churn reduced.

The distinction matters operationally. An output-focused team asks "what can we ship this sprint?" An outcome-focused team asks "what user problem should we solve this sprint, and what is the smallest thing we can build to test whether our solution works?"

Here is a concrete comparison:

| | Output-focused team | Outcome-focused team |
|---|---|---|
| Sprint goal | "Ship the Slack integration" | "Reduce manual feedback collection by 50%" |
| Success metric | Feature deployed to production | Users spend 50% less time on manual collection |
| If it fails | "We shipped it, success" | "The integration shipped but only reduced time by 10%; we need to iterate" |
| Next action | Move to next feature on the list | Investigate why the outcome was not reached, test a different approach |

Output thinking leads to feature factories. The team ships constantly but the product doesn't get meaningfully better. Users accumulate features they did not ask for and still lack the ones they need. The roadmap becomes a feature list driven by internal ideas rather than user evidence.

Outcome thinking leads to deliberate product development. The team may ship fewer features, but each release is grounded in a real user need validated through discovery. Features are smaller and more targeted because they were designed to solve a specific problem, not to fill a sprint.

This is where feedback data becomes strategic. Vote counts on feature requests are a proxy for outcome demand. When 300 users vote for "Slack notifications when a teammate comments," that is not just a feature request — it is evidence of an unmet collaboration need. An outcome-focused team uses that signal to explore the opportunity ("users need real-time awareness of team activity") before committing to a specific solution.

Dual-track agile: running discovery and delivery in parallel

A common objection to continuous discovery is that it slows down delivery. The opposite is true when you structure it correctly using dual-track agile.

The discovery track and delivery track run in parallel:

- Discovery track (product trio): interviewing users, mapping opportunities, designing experiments, and validating solutions. This produces validated opportunities and tested solution concepts.

- Delivery track (full team): building, testing, and shipping the solutions that passed through discovery. This produces working software.

The tracks operate on different cadences. Discovery runs weekly — new interview, new insights, new experiment results. Delivery runs in sprints or continuous flow. The output of discovery (validated solution concepts) feeds the input of delivery (the sprint backlog).

This means the team is never idle waiting for research. Engineers build solutions that discovery already validated while the product trio discovers and tests the next set of opportunities. The result is higher shipping velocity with fewer wasted features.

Tools for continuous discovery

Continuous discovery works best when you have infrastructure that captures user signal without requiring constant manual effort. You need tools that keep the feedback channel open between formal interviews.

Feedback boards are the backbone of always-on discovery. They give users a place to submit feature requests, describe pain points, and vote on what matters most. Unlike interviews (which capture one user's perspective at a time), a feedback board accumulates signal from your entire user base continuously. Over weeks and months, patterns emerge that no single interview could reveal.

Voting quantifies demand. When users vote on feature requests, you get a rough but useful measure of how many people share a given need. This is not a substitute for qualitative understanding — you still need to understand why users want something — but it gives you a starting point for prioritization that is grounded in real demand rather than assumptions.

A public roadmap shows what you are doing about it. Discovery without action erodes trust. When users see their feedback reflected in your roadmap, they keep providing it. When feedback disappears into a void, they stop. A public roadmap closes the feedback loop between "we heard you" and "here is what we are building."

An in-app widget captures context. Scheduled interviews give you depth. An in-app feedback widget gives you breadth. When a user hits a frustration point, they can submit feedback in the moment — with the page URL, their account context, and their emotional state captured automatically. This is signal you would never get in a scheduled interview because users forget the specifics by the time they sit down to talk.

Quackback automates the always-on feedback channel that continuous discovery depends on. Feature request boards capture ongoing signal between interviews. AI-powered duplicate detection and merge suggestions keep your feedback organized as volume grows. Vote counts surface demand patterns. And automatic notifications tell users when their request ships, closing the loop without manual follow-up. For teams practicing continuous discovery, it replaces the spreadsheet-and-Slack approach with a structured system that scales.

Product operations and continuous discovery

As organizations scale, the challenge of continuous discovery shifts from "how does one team do it" to "how do multiple teams do it consistently." This is where product operations comes in.

Product ops teams create the systems and processes that make discovery scalable. They standardize how feedback is collected across teams. They build shared repositories of insights so that one team's discovery benefits others. They ensure that feedback from sales, support, and customer success reaches the product teams that need it.

In practice, product ops supports continuous discovery by:

- Centralizing feedback infrastructure. One feedback board, one source of truth for feature requests, shared across all product teams. This prevents the common problem of three teams collecting feedback in three different tools with no cross-visibility.

- Automating feedback routing. When a support ticket contains a feature request, it should automatically surface in the relevant product team's feedback pipeline. When a sales call reveals a deal-blocking gap, that signal should reach the team responsible for that area.

- Reporting on discovery health. Product ops can track whether teams are maintaining weekly user contact, how much feedback is being collected, and whether insights are being connected to roadmap decisions. These metrics keep continuous discovery from quietly fading after the initial enthusiasm wears off.
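As a rough illustration of automated routing, the sketch below assigns incoming feedback to a product area by keyword match. It is deliberately naive and not how any particular tool works: the `AREA_KEYWORDS` mapping, the area names, and the `route` function are all hypothetical, and a production setup would more likely rely on tags from the support or CRM system.

```python
import re

# Hypothetical mapping from product area to trigger words; tune to your product.
AREA_KEYWORDS = {
    "integrations": {"slack", "webhook", "api", "zapier"},
    "onboarding": {"onboarding", "invite", "setup", "signup"},
    "billing": {"invoice", "pricing", "billing", "refund"},
}


def route(text: str, default: str = "triage") -> str:
    """Return the product area whose keywords appear most often in `text`.

    Falls back to `default` when nothing matches, so unmatched feedback
    lands in a shared queue instead of being dropped.
    """
    words = re.findall(r"[a-z]+", text.lower())
    scores = {
        area: sum(word in keywords for word in words)
        for area, keywords in AREA_KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else default


print(route("Customer asked for Slack notifications via webhook"))
print(route("Can't find how to invite my team during setup"))
print(route("The app feels slow"))
```

The fallback queue matters more than the matching logic: routing that silently discards unmatched feedback recreates the void the process is meant to remove.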

Track these discovery health metrics monthly:

| Metric | Target | Why it matters |
|---|---|---|
| Weekly user touchpoints per team | At least 1 | Minimum viable discovery |
| Interview snapshots created | 4+ per month | Evidence the team is capturing insights |
| New opportunities identified | 2-3 per month | Discovery is producing actionable input |
| Assumptions tested | 2+ per month | Team is validating before building |
| Feedback submissions per week | Growing or stable | Users trust the feedback channel |
| Time from feedback to roadmap | Under 30 days | Discovery is connected to delivery |
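A monthly check against these targets is simple enough to script. The sketch below encodes the table's thresholds; the `MonthlyDiscovery` record, its field names, and the sample numbers are assumptions for illustration, and "growing or stable" is interpreted here as "not lower than the previous month's weekly average."

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class MonthlyDiscovery:
    touchpoints_per_week: list[int]    # one entry per week in the month
    snapshots: int
    new_opportunities: int
    assumptions_tested: int
    feedback_per_week: float           # this month's weekly average
    feedback_per_week_previous: float  # last month's weekly average
    days_feedback_to_roadmap: float


def health_report(m: MonthlyDiscovery) -> dict[str, bool]:
    """Map each metric to True if it meets the target in the table."""
    return {
        "weekly user touchpoints": all(n >= 1 for n in m.touchpoints_per_week),
        "interview snapshots": m.snapshots >= 4,
        "new opportunities": m.new_opportunities >= 2,
        "assumptions tested": m.assumptions_tested >= 2,
        "feedback submissions": m.feedback_per_week >= m.feedback_per_week_previous,
        "time from feedback to roadmap": m.days_feedback_to_roadmap < 30,
    }


# Sample numbers are invented.
month = MonthlyDiscovery(
    touchpoints_per_week=[1, 2, 0, 1],
    snapshots=4,
    new_opportunities=3,
    assumptions_tested=1,
    feedback_per_week=42,
    feedback_per_week_previous=40,
    days_feedback_to_roadmap=21,
)

report = health_report(month)
missed = [name for name, ok in report.items() if not ok]
print("Missed targets:", missed)
```

Checking touchpoints week by week rather than as a monthly total is intentional: four conversations in one week and none in the other three would hit a monthly count of four while missing the weekly habit.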

For product ops teams managing feedback at scale, tools that aggregate signal across boards, tag requests by product area, and surface trends automatically reduce the operational burden of keeping discovery running across an entire product organization.

Frequently asked questions

How is continuous discovery different from agile user research?

Agile user research typically happens within sprint cycles — test a prototype during the sprint, incorporate findings into the next sprint. Continuous discovery is broader. It includes research but also encompasses ongoing feedback collection, opportunity mapping, and assumption testing. The key difference is that continuous discovery is tied to a structured framework (the opportunity solution tree) that connects every piece of user contact to a desired outcome.

What if our users will not talk to us every week?

You don't need the same users every week. Rotate through different segments, different use cases, different stages of the customer lifecycle. You also don't need a formal interview every week. Reviewing feedback board submissions, analyzing support tickets, or watching session replays all count as user contact. The goal is fresh signal, not a specific format.

Build a participant pipeline that produces more signups than you need. Add an opt-in prompt in your product ("Would you be open to a 20-minute call about your experience?"), include it in onboarding emails, and ask support to flag willing participants. Opt-in rates vary by product, but if roughly 10% of active users say yes when asked directly, a product with 500 active users has 50 potential participants, more than enough for one per week.
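The pipeline arithmetic is easy to rerun with your own numbers. In this sketch the function names are hypothetical and the 2% rate is an arbitrary pessimistic case; the 500-user, 10% case is the one from the paragraph above.

```python
def opt_in_pool(active_users: int, opt_in_rate: float) -> int:
    """Estimate how many active users would agree to a call."""
    return round(active_users * opt_in_rate)


def weeks_covered(pool: int, interviews_per_week: int = 1) -> int:
    """Weeks of interviews the pool supports if each person is interviewed once."""
    return pool // interviews_per_week


pool = opt_in_pool(500, 0.10)
print(pool, weeks_covered(pool))            # 50 50

# A pessimistic 2% opt-in rate still covers ten weeks at one interview per week.
low_pool = opt_in_pool(500, 0.02)
print(low_pool, weeks_covered(low_pool))    # 10 10
```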

How do you balance continuous discovery with shipping speed?

Discovery and delivery are not competing activities. They run in parallel. While engineers build solutions validated in previous weeks, the product trio is discovering and testing the next set of opportunities. The dual-track approach (discovery track and delivery track running simultaneously) means discovery does not slow down shipping. It makes shipping more targeted.

Do you need a dedicated researcher for continuous discovery?

No. Continuous discovery is designed to be practiced by the product trio (product manager, designer, and engineer), not outsourced to a research team. A dedicated researcher can add depth, help with method selection, and coach teams on interview technique. But the core habit of weekly user contact should belong to the people making product decisions, not a separate function.

How does continuous discovery connect to product strategy?

Your product strategy sets the desired outcomes that sit at the top of your opportunity solution tree. Discovery fills in everything below — the opportunities, solutions, and experiments. Without a strategy, discovery produces interesting insights with no clear direction. Without discovery, strategy produces bets based on assumptions instead of evidence. The two practices reinforce each other.

What is the minimum viable version of continuous discovery?

One 20-minute user conversation per week, captured in an interview snapshot, shared with the product trio. That is the floor. Add a feedback board to collect signal between conversations, and you have the two core inputs — qualitative interviews and quantitative vote data — that drive every other element of the framework.

Authored by James Morton

Founder of Quackback. Building open-source feedback tools.
